Key Takeaways

- PixVerse AI stands out because it is tuned for believable motion, grounded product behavior, and lifestyle scenes that hold up in actual brand work. That makes it more useful for marketers than models that look impressive in demos but break under scrutiny.

- For brand teams, the real value is not just faster generation. It is the ability to turn one still, one presenter video, or one brand character into multiple production-ready variations without rebuilding the asset from scratch.

- PixVerse is especially strong for image-to-video AI workflows, localized presenter videos, and AI product video creation where realism matters more than cinematic spectacle. That gives it a clear role in a serious content stack.

- Native audio, lipsync, and motion transfer make PixVerse operationally useful, not just creatively interesting. These features shorten the path from concept to publishable asset.

- Inside invideo, PixVerse becomes more powerful because it sits alongside other leading models in one workflow. Teams can generate with the right model for each shot, then edit, brand, and export in the same workspace.

AI fixed one part of brand videos very quickly. It made motion cheaper and faster.

What it did not fix is the part that matters most once the video is actually attached to a brand. A lot of AI video still falls apart under normal scrutiny. The product bends when it should stay rigid. The splash looks synthetic. The hand looks off for half a second. The output looks fine in a demo and suddenly becomes unusable once you imagine it running as a paid ad.

That gap matters.

For a meme account, a weird frame can be okay. For a brand, it is a credibility hit.

This is where PixVerse stands out.

It is not the most cinematic model in every comparison. Its strength is different.

PixVerse is good at the kind of motion brands actually need: grounded product behavior, believable lifestyle footage, and presenter-driven clips that feel closer to usable assets than experimental output.

That is what makes it interesting. And that is what this blog is really about:

- What PixVerse AI is
- What it does well
- Where it fits
- How brand teams can actually use it inside invideo

What PixVerse Actually Is

PixVerse is a Singapore-based AI video company founded in 2023. It reached unicorn status in March 2026 and is used by more than 100 million creators across 175 countries.

That is useful context, but the bigger point is simpler. PixVerse is not a novelty solution. It is a serious commercial platform with real scale behind it.

From a workflow point of view, PixVerse generates short videos from text prompts, still images, or both. Its flagship model supports 1080p output, 15-second single-pass generations, multi-shot sequences, and integrated audio. It is also known for camera control, character performance, physics interactions, and realistic motion.

That last part is the one that matters most here.

A lot of AI video tools are good at producing something eye-catching. PixVerse is more interesting because it is better at producing something usable. If you are working on product visuals, short lifestyle scenes, social-first campaigns, or localized presenter content, that difference is not subtle. It is the difference between a clip that gets saved to a moodboard and a clip that actually gets shipped.

That is the practical way to think about PixVerse AI. Not as a general-purpose video engine, but as a model with a very clear strength in believable branded motion.

Why Brand Teams Should Care

If you are on a brand or marketing team, realistic motion is not a nice-to-have. It is essential.

That is true for obvious categories like beauty, fashion, food, and cinematics. It is also true for SaaS, apps, and B2B brands that rely on presenter videos, feature explainers, and social content. Once something looks slightly fake, the viewer stops processing the message and starts noticing the flaw.

The first reason is physical believability, which is what PixVerse is tuned for. Products hold together better. Lifestyle footage feels more grounded. Motion feels closer to what a brand team would actually approve.

The second reason is speed, but not in the usual sense of “make content faster.” The more useful framing is that PixVerse shortens the path from concept to usable asset.

Because it supports native audio, you are not always generating a silent visual and then building the sound layer separately. Dialogue, ambience, and effects can come through in the same pass. For short-form brand work, that matters. It cuts down the amount of stitching required before the asset is even ready for review.

Here is a good example of that in practice. This scene carries a lot of visual and audio complexity, but it was generated in PixVerse from a short two-sentence prompt:

Then there is variation, which is where the real operating leverage shows up.

One presenter video can be localized into multiple language versions with lipsync. One brand character can perform different gestures using motion transfer. One product still can become several social assets instead of one static visual.

Flashy features? Yes. But they also significantly change how efficiently a team can produce campaign volume.

That is the real reason to look at PixVerse AI. It makes believable brand variation cheaper.

What PixVerse Can Do

The easiest way to understand PixVerse is to look at the jobs it can take off a brand team’s plate.

1. Text-to-video

If you want to start from a brief, PixVerse can generate a branded scene directly from text.

This works best when the prompt is clear about the action first, then builds in lighting, mood, and camera direction. It is useful for early concept development, social tests, and first-pass creative directions where speed matters but the result still needs to feel grounded.

2. Image-to-video AI

This is probably the most practical workflow for many teams. You already have the still. It might be a product photo, a campaign visual, a packshot, or a brand character. PixVerse turns that into motion while staying close to the original composition and lighting. You are not inventing the asset from scratch. You are extending something the brand already owns.

3. Multi-shot sequences

PixVerse can also generate connected shots inside one sequence. That makes it useful for short promos, teaser edits, and small brand narratives that need more than one angle but do not need a full shoot. The value here is not just visual continuity. It is that the asset starts to feel more like a real campaign component and less like an isolated AI experiment.

4. Native audio

This one matters more than it sounds. Audio comes with the visual, which means the output is closer to ready the moment it renders. For social teams and lean marketing teams, that reduction in post work is a big deal.

5. Lipsync

For marketers, lipsync is best understood as a localization tool. You create one presenter-led asset, then adapt the spoken track for different markets while keeping the visual identity consistent. Same framing, same lighting, same person, different audience. That is a much more useful framing than treating it like a novelty talking-head feature.

6. Motion transfer

Motion transfer is where PixVerse gets especially interesting for brand characters and product storytelling. You take a still image and a driving video, then map the movement from the driving clip onto the subject of the image. That lets teams build repeatable performance without rebuilding every asset from zero.

Put all of that together and the value becomes pretty clear. PixVerse lowers the production threshold for AI product video and branded motion content. The input is a brief and a strong asset. Not a full crew.

When To Use PixVerse, And When To Use Something Else

PixVerse gets much easier to use once you stop expecting it to win every job.

Use it when the shot needs to feel believable.

Product rotations, pours, splashes, drape, grounded lifestyle moments, presenter localization, and motion-driven brand character work all sit naturally in its lane.

It is also a strong choice when native audio matters and you want the output to come out closer to publish-ready in one go.

That is the zone it owns.

There are still plenty of cases where another model makes more sense:

- If the brief is a cinematic 4K hero film, use a Veo-class model.
- If the job needs tighter multi-shot direction across a more complex sequence, use a Kling-class model.
- If the priority is more advanced world simulation or something closer to high-end synthetic filmmaking, use a Sora-class model.

That does not weaken the PixVerse case. It strengthens it.

Serious brand teams do not force one model to solve everything. They use the one that fits the shot. PixVerse AI becomes much more useful when you treat it that way.

Three Brand Workflows Worth Stealing

The best way to judge a model like this is not by features. It is by what it lets a team do on a normal week.

1. Product still to launch teaser

Start with one strong product image. Generate a few short motion variations in PixVerse. Pick the one where the movement feels most believable, then add captions, brand colors, and a logo end card in the editor. That turns one static asset into multiple short-form launch creatives without setting up a full product shoot.

2. Ten markets with a single shoot

Record a single presenter video. Then use lipsync to localize it across markets while keeping the visual exactly the same. Same wardrobe, same lighting, same composition. Only the spoken version changes. For product updates, campaign announcements, and feature explainers, that is a much more scalable workflow than shooting every version separately.

3. Brand character with directed motion

If you have a brand mascot or recurring character, motion transfer lets you build movement into that system much more efficiently. Start with the character image, pair it with a driving clip that contains the gesture or body language you want, and generate a new branded asset from there. That is a practical way to build a motion library over time instead of treating every character animation as a one-off job.

These are the kinds of workflows where PixVerse stops being interesting and starts being useful.

Where PixVerse Fits Inside invideo

The real problem with AI video is not just picking the right model. It is dealing with the mess that comes after:

- One tool for generation
- Another for voice
- Another for sound
- Another for editing
- Another for captions
- Another for exports

The output is only as efficient as the system around it, and most teams end up with too many disconnected pieces.

That is why PixVerse is more useful inside invideo than as a standalone experiment.

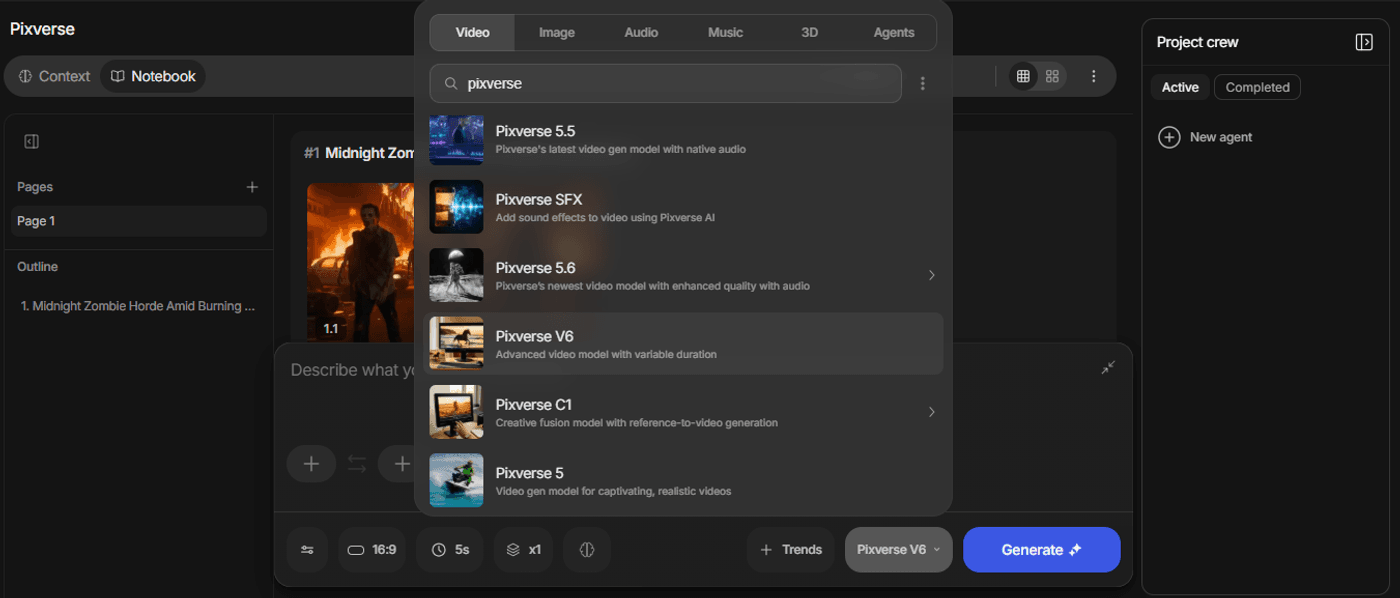

With invideo, PixVerse is part of the Generative Models library in Agents & Models.

It sits alongside more than 50 other models and tools, including Veo 3.1, Kling 3.0, Sora 2 Pro, and Seedance 2.0.

So if PixVerse is the right model for realistic motion and brand-ready visuals, you use it there. If another shot needs a different model, you switch without breaking the workflow.

The path is simple: open Agents & Models, go to Generative Models, select PixVerse V6, start a New Project, add your prompt or input asset, and generate.

From there, the clip lands in the same workspace for editing, captions, music, brand kit application, and platform-specific export.

That is the bigger advantage. PixVerse is stronger when it is part of a system, not sitting outside of one.

The Real Value of PixVerse for Brands

Brand teams do not need AI video that wins attention for being strange. They need AI video that does its job.

That is what makes PixVerse worth using. Not because it is the loudest model in the category, but because it consistently lands the kind of motion that branded work depends on. Products feel more real. Lifestyle clips feel more usable. Presenter assets become easier to scale. Static visuals become motion without turning into obvious AI experiments.

That is a meaningful difference.

The teams that get the most out of PixVerse do not treat it like a one-prompt magic trick. They treat it like a production tool with a very clear role. Use it for the shots it owns. Use other models for the shots they own. Bring everything together in one workflow, apply your brand system, and ship.

FAQs

1. What is PixVerse AI?

PixVerse AI is an AI video model designed to turn text prompts, still images, or both into short video clips. Its strongest use cases are product visuals, lifestyle scenes, presenter-led content, and branded motion assets that need to feel believable rather than overly stylized.

2. What makes PixVerse AI useful for brands?

PixVerse is useful for brands because it handles the kinds of shots marketing teams need all the time: product movement, grounded lifestyle footage, lipsynced presenter videos, and motion-led content built from existing brand assets. The main advantage is not just speed. It is that the output feels closer to something a team could actually publish.

3. Is PixVerse AI good for image-to-video AI workflows?

Yes. This is one of its most practical strengths. If you already have a strong product photo, campaign visual, or brand character, PixVerse can turn that still into motion while preserving the original composition and look. For brand teams, that makes image-to-video AI much more useful because it builds on assets they already own.

4. Can PixVerse AI create AI product video content?

Yes. PixVerse works especially well for AI product video use cases where realism matters, such as product rotations, pours, splashes, drape, and close-up lifestyle scenes. It is a strong fit for brands that need short-form product motion content without setting up a full production shoot for every variation.

5. Does PixVerse AI support lipsync and audio?

Yes. PixVerse supports native audio generation as well as lipsync. That means teams can generate video with sound already built in, and they can also adapt existing presenter videos for different languages or markets without reshooting the original asset.

6. What is PixVerse motion transfer?

Motion transfer lets you use a driving video to guide the movement of a subject from a still image. In practice, that means you can take a brand character, mascot, or product visual and apply a specific gesture, body movement, or performance style to it. For marketers, it is a practical way to create more directed variations without rebuilding the asset from scratch.

7. When should you use PixVerse instead of another AI video model?

Use PixVerse when the priority is believable, production-ready brand content. It is a strong fit for product visuals, lifestyle clips, localization, and motion-led brand assets. If the job calls for a 4K cinematic hero film or a more tightly directed multi-shot sequence, another model may be a better fit.