Quick Rundown

- Use Seedance 2.0 to conquer multi-shot consistency. It excels at narrative sequences and high-end ads, ensuring every scene feels like part of a single, unified production.
- Choose Kling AI 3.0 for photorealistic human motion at an accessible price. It powers motion-heavy projects, product campaigns, and human-centered storytelling.
- Prioritize Veo 3 when you need native audio. It streamlines your workflow by generating sound and visuals simultaneously, slashing the time you spend on stitching and post-production.
- Deploy Grok Imagine for rapid cinematic prototyping. It allows creators to test visual styles and creative directions instantly before they commit to a heavy-duty production pipeline.
- Master the "control play" with Wan 2.7. It offers technical creators open-source flexibility and local deployment, allowing you to fine-tune specific models for your own characters and worlds.
- If you want a single subscription that unlocks all these models in one place and in a single workflow, choose invideo as your primary platform.

Filmmaking has changed.

Bitcoin: Killing Satoshi, a $70 million feature starring Gal Gadot and Casey Affleck, used AI to replicate 200+ locations that would otherwise have cost the production $300 million.

Indie filmmaker Brad Tangonan completed a short called Murmuray using Google's Veo and Gemini tools and screened it at Soho House New York.

Neither of these required a studio, a location budget, or a crew of fifty.

What matters in an AI filmmaking tool

You do not need every tool to do everything under the sun.

You need to know where your workflow breaks. For most creators, the break points are pretty consistent.

The first is continuity. Can the tool hold a character, a world, and a visual language across multiple shots without forcing you to rebuild the project every time?

The second is control. Can you make a change once and have it ripple through the right parts of the sequence?

The third is execution quality. That includes motion realism, prompt adherence, camera logic, and whether audio has to be patched in later.

The fourth is workflow fit. Some tools are built to be used directly. Some are better inside a stack. Some are only worth it if you want local control and can handle the setup.

That is the frame to keep in your head. Not which tool looks best in a single clip.

The Best AI Filmmaking Tools in 2026

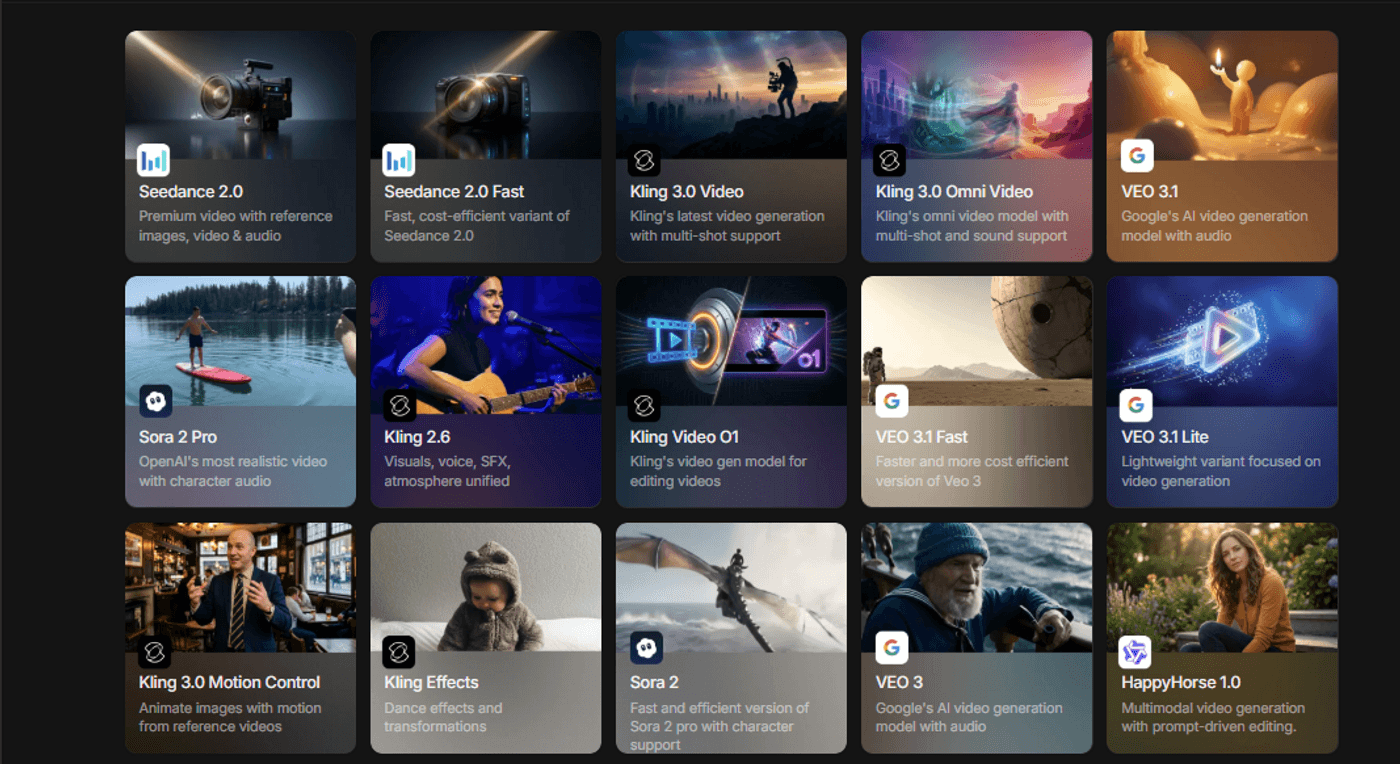

1. Seedance 2.0

Seedance is arguably the number one tool in this space right now. If your problem is not project memory but shot-to-shot consistency, it is one of the strongest tools in the category.

This is the tool people reach for when they are trying to make multiple shots feel like they belong to the same piece instead of the same prompt library.

That distinction matters.

Seedance is designed to reduce shot-to-shot drift by treating multi-shot generation as the default challenge, not as something you patch later.

Its input structure is a big part of why. You are not limited to a text prompt. You can anchor the generation with images, video clips, and audio references in one pass. That lets you give the model something closer to a production brief than a description.

For filmmakers, that means less guessing.

2. Kling 3.0

Kling is best when what you care about most is body dynamics, facial movement, action, or motion transfer. The Motion Control feature is what makes it especially useful in production experiments.

Instead of trying to describe movement from scratch, you can extract motion from a reference video and apply it to a new subject.

For choreography, stylized action, and campaign work, that can remove a lot of iteration.

3. Google Veo 3.1

If native audio is non-negotiable, Veo becomes a serious contender fast.

This is not a cosmetic feature. It changes the structure of the workflow.

When dialogue, sound effects, and ambient sound arrive in the same generation, you remove an entire class of cleanup and stitching work. The value is not just that it looks good. The value is that one less layer has to be rebuilt afterward.

Veo is also one of the clearer options for developer and enterprise teams. Between API access, production stability, and integration into Google’s broader ecosystem, Veo makes the most sense when the tool is part of a larger system rather than a one-off creator playground.

4. Grok Imagine

Grok Imagine is one of the more interesting tools for fast cinematic prototyping.

Not because it replaces a full pipeline. Because it helps you get to a visual idea quickly, especially when you care about stylization and want a strong-looking output without building a whole sequence architecture around it.

Its Aurora engine takes an autoregressive approach rather than a standard diffusion flow, and the practical claim here is better prompt adherence and less drift.

Use Grok Imagine to build concept sequences and push short-form cinematic ideas before deciding which shots deserve a more durable workflow.

That is the right way to think about it.

Not as the universal answer. But as a fast way to pressure-test a look.

5. Wan 2.7

Wan matters for a different reason than the cloud tools.

It gives you control.

Not convenience. Not simplicity. Control.

If you want an open model with no usage caps, no API dependency, and the ability to run locally, Wan is one of the most important options in the category. That is why technical creators pay attention to it. The real advantage is not just that it is free. It is that you can fine-tune it on your own characters, your own world data, and your own pipeline.

For long-form work, that matters.

Closed systems are easier to start with, but they still limit how deeply you can shape the model around your production. Wan is more demanding, but it gives advanced users something cloud tools still struggle to offer: deeper local control over consistency.

6. Happy Horse

Alibaba’s Taotian Future Life Lab developed Happy Horse under the leadership of Zhang Di. After designing Kling 1.0 and 2.0 at Kuaishou, Zhang rejoined Alibaba in late 2025 to spearhead this project.

Unlike tools that "glue" audio onto video as an afterthought, Happy Horse generates voice and lip movements simultaneously within the model. This native synchronization eliminates the jarring, immersion-breaking lag found in traditional AI video. As such, Happy Horse is good at:

- Cinematic Fluidity: Delivers smooth, professional-grade camera movements.
- Visual Anchoring: Maintains consistent character features and wardrobe details across cuts.
- Narrative Cohesion: Executes multi-shot sequences that feel like a single, coherent production.

We have tested Happy Horse 1.0. You can see how it holds up against the top model on the market, Seedance 2.0.

One Tool To Rule Them All

If you are on the lookout for a platform that has all the aforementioned models and more, invideo is where you need to be.

With invideo, you define the character. You establish the world. You set the tone, all with a prompt, and select the model of your choice.

Here you are directing, not resetting over and over again.

What’s more, invideo’s Agent One is built to keep its memory. Characters, world details, and visual direction persist across the production, so the next instruction is interpreted in context instead of in isolation.

This flips the script.

Instead of treating generation as a chain of disconnected clips, it behaves more like a production layer. You can upload a script, treatment, or rough concept, and Agent One uses that context as the baseline for what follows. If you adjust a character, a sequence, or a visual direction, the change is not trapped inside a single prompt.

This is also where the agentic editing layer matters. A note such as a lighting change in one sequence is not just applied to one output. The AI can identify where that direction touches the broader scene set and execute it across connected shots.

It’s the difference between generating footage and managing a production.

Another practical advantage is that you do not need to talk to it like a prompt engineer. You can brief scenes in plain production language, and Agent One handles model choice, prompt construction, and direction logic under the hood. That matters!

From Generation to Production

Don’t get distracted by the "shiny object" of a single perfect clip. If you are building a career in this new landscape, focus on continuity and context.

If you want to move beyond disconnected generations and start actually directing a cohesive production, leverage invideo.

The "Elders" of the space have shown us what's possible, but the current generation of agentic tools is finally letting us do the work. The studio is now a prompt away, so go build your world.

Frequently Asked Questions

1. What is the best AI filmmaking tool for beginners in 2026?

If by beginner you mean “I want usable results without learning an entire technical stack,” the easiest entry points are tools with natural-language workflows and generous free access. The better beginner question, though, is what you are trying to make. A short film, product ad, faceless YouTube video, and previz sequence all need different things.

2. Can AI tools replace a traditional film crew in 2026?

No. And the serious productions using AI are not acting like that is the goal. What AI is replacing first is overhead: extra location complexity, concept iteration time, some categories of set extension, and parts of post. Direction, taste, performance, and judgment are still the load-bearing pieces.

3. Which AI filmmaking tools are completely free?

Open-source options with local execution come closest to truly free, assuming you already own the hardware. Browser-based tools with free tiers are better described as limited-access tools than free production systems.

4. What is the biggest problem in AI filmmaking right now?

Consistency. Not one-shot beauty. Not raw novelty. Consistency across scenes, characters, revisions, and workflow steps. That is still where most projects either become films or fall apart into disconnected clips.

5. What is the best AI tool for native audio?

If native synchronized audio is central to the project, Veo remains one of the most important tools to evaluate because that capability is part of the clip generation itself.