Key Takeaways

- Invideo brings multiple AI video generation models into one workflow, so you can switch between Seedance, WAN, Kling, and more without resetting context or managing separate tools.

- Seedance leads in visual consistency and cinematic output, WAN dominates motion and physics, Kling handles structured storytelling, while Runway remains useful for rapid experimentation.

- Choosing the wrong AI video generation tool shows up immediately in broken motion, identity drift, or unusable footage, which increases iteration time and cost.

- High-quality AI video production now depends on evaluating models across continuity, motion behavior, prompt control, and output polish, not just generation speed.

- Multi-model workflows have become the standard, where different models handle different parts of the same video instead of forcing one model to do everything.

- Invideo solves model fragmentation by giving you a single workspace to access, compare, and combine multiple AI video generation models without breaking your workflow.

The 2024 film The Brutalist sparked conversation for more than its storytelling. Its editor openly spoke about using AI to refine the actors’ Hungarian accents and to help design architectural visuals. That moment marked a clear shift: AI video tools were stepping into serious, award-level production work.

That shift sets the tone for where AI video generation stands today. It has moved past experimentation. You now judge a model by whether it delivers footage you can actually use. The best AI video generation models in 2026 compete on control, motion accuracy, and how precisely they match your intent.

Some models deliver cinematic depth, others focus on physics and realism, while a few prioritize speed. Choosing the wrong AI video generation tool can lead to wasted credits and outputs that feel slightly off.

That is why many teams now use invideo to access multiple AI video generation models in one place and move faster with fewer compromises. It lets you test different models side by side, reuse assets, and keep your workflow consistent instead of resetting context every time. You spend less time switching tools and more time refining outputs that actually fit your brand.

Let’s look at the best AI video generation models in 2026 and how each one performs where it matters.

How to Judge an AI Video Generation Model

Before you compare features, you need a clear way to judge output quality. The gap between a hobby tool and a production-ready engine comes down to a few non-negotiables that define how usable your footage really is.

- Frame continuity: Watch how scenes hold together over time. Weak models distort backgrounds or shift geometry as the camera moves, while stronger ones keep every element steady from start to finish.

- Character consistency: Generate the same subject across different shots and angles. A reliable model preserves facial structure, proportions, and details so your character does not change between frames.

- Real-world behavior: Look at how motion behaves. Water, fabric, lighting, and shadows should follow natural cause and effect, not feel simulated or disconnected.

- Instruction accuracy: Test how well the model understands your direction. You should not rely on hacks or repeated prompts to get basic cinematic cues or scene composition right.

- Output quality: Check resolution and motion smoothness together. Clean 4K output with stable motion saves you hours in post and gives you footage that is ready to use.
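If you want to compare models on these five criteria in a repeatable way, one option is a simple weighted scorecard. The sketch below is only an illustration: the weights, the 1 to 5 rating scale, and the sample ratings are assumptions made for this example, not measured results for any model.

```python
# Hypothetical weights for the five criteria above (they sum to 1.0).
# Adjust them to match what your project actually needs.
WEIGHTS = {
    "frame_continuity": 0.25,
    "character_consistency": 0.25,
    "real_world_behavior": 0.20,
    "instruction_accuracy": 0.20,
    "output_quality": 0.10,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Combine per-criterion ratings (assumed 1-5 scale) into one score."""
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    total = sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)
    return round(total, 2)


# Illustrative ratings you might assign after reviewing test clips.
sample_ratings = {
    "frame_continuity": 5,
    "character_consistency": 4,
    "real_world_behavior": 3,
    "instruction_accuracy": 4,
    "output_quality": 2,
}

print(weighted_score(sample_ratings))
```

Rate every model against the same test prompts so the scores stay comparable, and treat the result as a starting point for discussion rather than a verdict.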

2026 AI Video Model Comparison Table: A Snapshot

Here’s a quick snapshot of the best AI video generation models in 2026.

| Brand / Model | Key Features | Best For | Cost Tier |
|---|---|---|---|
| Seedance 2.0 | Multi-shot generation, strong visual consistency, cinematic camera control, image-to-video workflows | High-end cinematic content, product videos, brand storytelling | From $14 USD/month |
| Runway Gen-3 Alpha | Flexible prompting, quick iteration, experimental scene generation, basic VFX-style control | Creative exploration, prototyping, early-stage concepts | From $15 USD/month |
| WAN (2.6) | Advanced motion modeling, realistic physics, smooth frame transitions, high-res fast generation | Action-heavy scenes, realistic motion, simulations | From $29.90 USD/month |
| Kling 3.0 | Multi-shot sequencing, reference elements for consistency, strong prompt control, video editing modes | Narrative videos, ads, structured storytelling | From $9.8 USD/month |
| Sora 2 (V2) | Native audio generation, realistic environments, multi-shot continuity, style flexibility | Realistic scenes, narrative clips, immersive content | From $39.90 USD/month |
| Google Veo 3.1 | 4K output, native audio generation, strong physics + realism, reference image control, multi-aspect (16:9 / 9:16), clip extension + transitions | High-quality ads, social content with audio, realistic storytelling, multi-platform video production | Not publicly standardized (API-based / usage pricing) |

Ideal Use Cases and Limitations of Top AI Video Models

The top AI video generation models in 2026 each dominate a narrow lane. You need to understand where each model actually performs in production and where it breaks before you commit your time and credits. Let’s look at the top six AI video models in 2026:

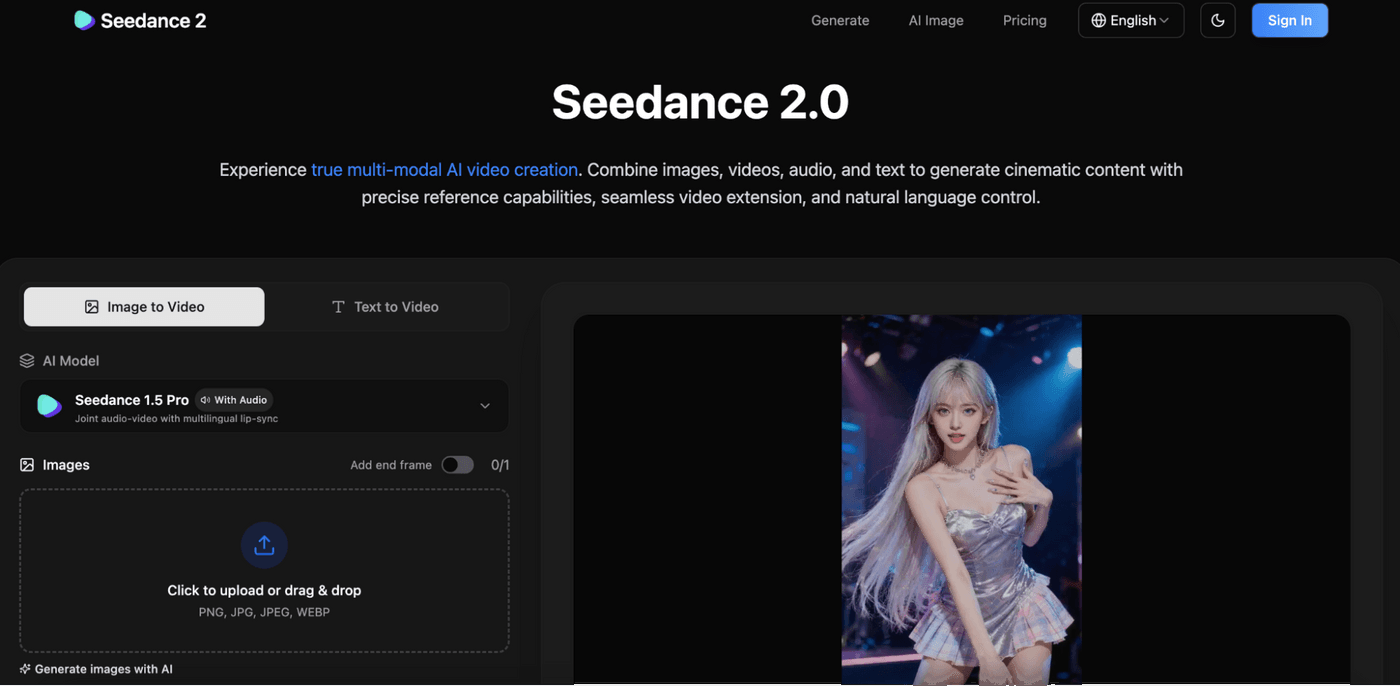

1. Seedance: The New Benchmark for Aesthetic Consistency

Seedance 2.0 handles motion better than most current video models. It makes your characters and environmental interactions (debris, collisions, movement through space) look physically believable instead of floaty.

It also supports multi-scene generation from a single reference. You can keep the same character, shift camera angles, and generate cuts that stay visually aligned across shots.

You can use it for brand films, product visuals, or storytelling content where look and mood matter more than rapid iteration. It gives you polished clips fast, with minimal manual editing.

What makes Seedance stand out

Seedance focuses on visual continuity across shots. It builds scenes automatically, applies motion intelligently, and maintains a consistent style through the entire sequence.

The model handles multi-input workflows well, which means you can combine text, images, and references without breaking the visual tone. It reduces the effort needed to storyboard and assemble clips into something that feels intentional.

Limitations of Seedance

A lot of users have flagged problems like:

- Resolution is limited (around 720p), and it struggles on larger displays

- Fine detail breaks down—faces, crowds, and backgrounds lose clarity

- Lip sync works at a basic level but lacks subtle expression

- Visual consistency starts slipping once sequences run longer

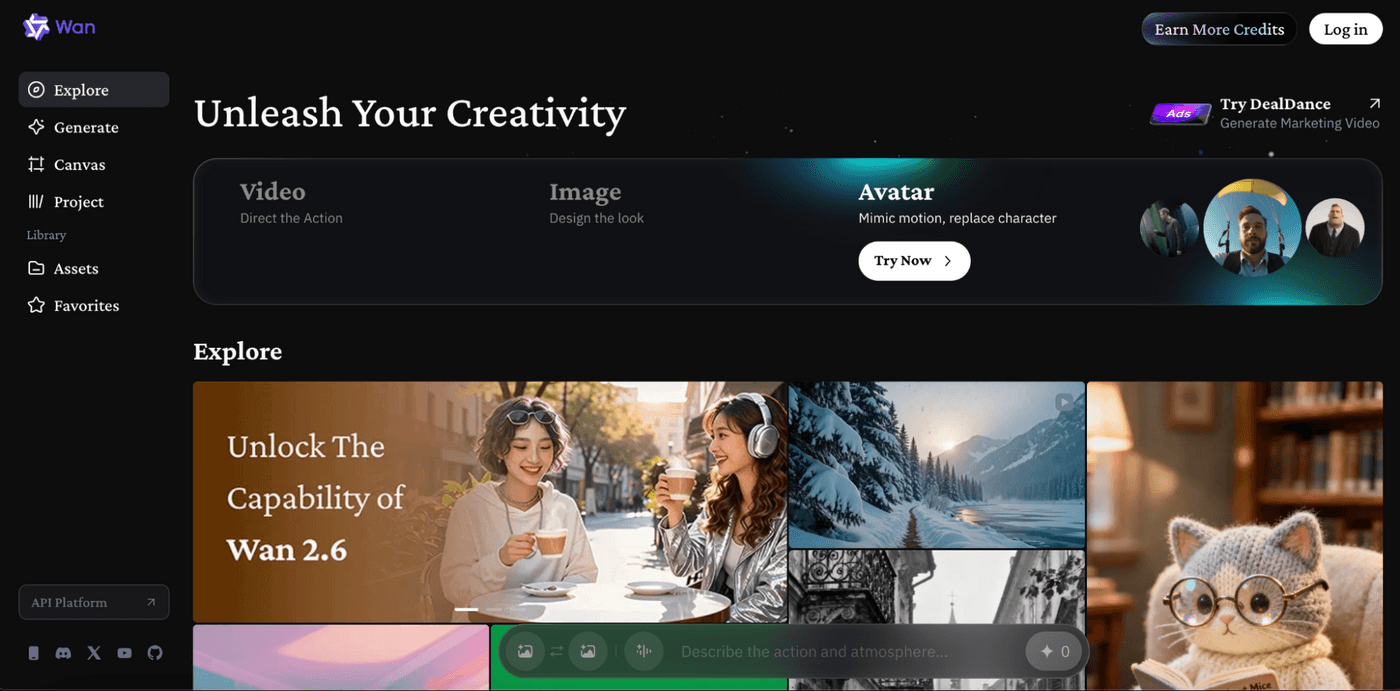

2. WAN: The Physics Powerhouse

WAN 2.5 follows prompts closely when the shot is clearly defined. Framing, subject placement, and basic actions usually match what you write, especially in simple setups like conversations or single-character scenes. It responds well to camera language (angle, framing, movement), and outputs improve when prompts read like shot directions.

It can generate 1080p video at 24fps with native audio, and it’s open source, which makes it easier to run locally or plug into custom workflows.
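WAN's preference for prompts that read like shot directions can be illustrated with a small prompt template. This is a sketch, not a documented WAN prompt format: the `ShotDirection` class, its fields, and the sentence layout are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class ShotDirection:
    """Hypothetical container for the camera language mentioned above."""
    subject: str
    action: str
    angle: str
    framing: str
    movement: str

    def to_prompt(self) -> str:
        # Lead with framing and angle, then the subject's action,
        # then the camera movement, like a line in a shot list.
        return (
            f"{self.framing}, {self.angle}. "
            f"{self.subject} {self.action}. "
            f"Camera: {self.movement}."
        )


shot = ShotDirection(
    subject="A barista",
    action="pours steamed milk into a cup",
    angle="eye-level angle",
    framing="Medium close-up",
    movement="static, locked off",
)
prompt = shot.to_prompt()
print(prompt)
```

Writing each shot this way forces you to decide on framing, angle, and movement before you generate, which is where clearly defined shots come from.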

What makes WAN stand out

WAN focuses heavily on motion intelligence. It uses advanced architectures to map how objects and bodies move through space, which results in smoother transitions and stronger frame coherence.

You get natural motion trajectories, better coordination between elements, and fewer distortions during movement. It also processes prompts quickly and supports high-resolution outputs, which makes it useful for rapid yet realistic generation.

Limitations of WAN

While WAN excels at motion realism, spatial accuracy, and smooth scene transitions, users often point out a few practical gaps, like:

- Motion degrades when multiple elements interact (crowds, water, overlapping movement)

- Best results require limiting motion to one source (either camera or subject, not both)

- Facial detail smooths out during expression changes, losing realism

- Scenes with multiple subjects or layers lose clarity and focus

3. Runway (Gen-3 Alpha): The Creative Sandbox

Runway Gen-3 Alpha fits early-stage ideation, not final production. It works when you want to test visual directions, explore styles, or generate rough concepts for marketing videos before committing to a polished output.

You can use it to prototype scenes, experiment with prompts, or build mood boards, but not when you need reliable, client-ready footage.

What makes Runway stand out

Runway gives you flexibility. It lets you explore a wide range of prompts, styles, and compositions quickly without overthinking structure.

The tool feels open-ended, which makes it useful for creative exploration and rapid iteration. You can test ideas fast, push visual boundaries, and discover directions that you can later refine using stronger, production-ready models.

Limitations of Runway (Gen-3 Alpha)

While Runway offers creative freedom and fast experimentation, users often point out consistent gaps:

- Struggles to accurately follow prompts, even when detailed

- Outputs often feel generic or loosely assembled

- Motion breaks down in complex or dynamic scenes

- Results rarely meet commercial or production standards

- High iteration cost with inconsistent payoff

4. Kling: The Logical Storyteller

Kling fits structured storytelling, where continuity matters across shots. You use it when your video needs to hold narrative flow, not just visual appeal.

Product videos, brand stories, and multi-shot sequences benefit the most because Kling keeps characters, props, and scenes aligned from one frame to the next without constant resets.

What makes Kling stand out

Kling handles sequence logic better than most models. Its multi-shot generation lets you build scenes that connect naturally instead of stitching isolated clips.

The reference element system locks characters and objects across shots, which makes brand storytelling far more reliable. It also responds well to directed prompts, so you can control timing, camera movement, and scene transitions with intent rather than guesswork.

Limitations of Kling

While Kling delivers strong continuity and control, users often point out trade-offs:

- High-quality generations consume credits quickly

- Rendering times can slow down iteration cycles

- Struggles with abstract or heavily stylized visuals

- Not always as strong in cinematic texture compared to niche models

- Requires planning upfront, which can limit fast experimentation

5. Sora 2 (V2): Extreme Realism

Sora 2 fits scenarios where realism needs to carry the entire video. You use it when you want scenes that feel filmed, not generated. It works well for narrative clips, simulated environments, or moments where subtle human behavior and environmental detail need to come through without heavy stylization.

What makes Sora stand out

Sora 2 pushes realism beyond visuals by combining motion, environment, and native audio in one pass. It can generate dialogue, ambient sound, and effects alongside the video, which changes how you build scenes.

The model also handles complex prompts across multiple shots while keeping faces, lighting, and tone consistent, which makes longer sequences feel more cohesive.

Limitations of Sora

While Sora 2 delivers strong realism and integrated audio, users often highlight structural gaps:

- Spatial continuity can break across cuts, even when audio flows smoothly

- Motion still slips into unnatural behavior in edge frames

- Scene geography and camera logic can feel inconsistent

- Access remains limited and slows real-world adoption

- Not fully reliable for precise editing or controlled storytelling

6. Google Veo 3.1

Google Veo 3.1 is built for teams that want production-grade video with tight control over visuals and sound. It takes text or image prompts and turns them into detailed clips with synced audio, strong physics, and clean prompt alignment.

You can use it for ads, social content, or narrative pieces where realism and timing matter. It handles both landscape and vertical formats, making it easy to produce for multiple platforms without reworking everything.

What makes Veo 3.1 stand out

Veo 3.1 focuses on realism and control. It renders lighting, motion, and physical interactions with a level of detail that holds up in longer sequences. Native audio generation is a key difference here—it builds sound directly into the scene instead of treating it as an afterthought.

The model also gives you more control over composition. Reference images help guide character appearance and style, and the system maintains that direction across different shots and aspect ratios. Clip extension and transition tools make it easier to build sequences that feel connected rather than stitched together.

There’s also a faster variant, Veo 3 Fast, which trades a bit of depth for speed and cost efficiency. That makes it useful for quick-turn content like ads or social posts.

Limitations of Veo 3.1

Some users have pointed out a few rough edges:

- Audio can feel loosely synced in more complex scenes

- Longer clips may still show small inconsistencies in motion or detail

- High-quality settings can be slow and expensive to run

- Strong prompt control sometimes requires iteration to get precise results

Choosing the Right Video Generation Model in Seconds

You don’t need another long comparison to decide. You need a fast way to match your goal to the right video generation model before you waste time or credits. Here’s a quick way to choose based on what your output actually demands:

| Your Priority | Best Model | What You Actually Get |
|---|---|---|
| High-fashion visuals, product consistency | Seedance | Strong visual cohesion, cinematic styling, consistent subjects across shots |
| Complex motion, realistic physics | WAN | Natural movement, accurate motion trajectories, stable frame transitions |
| Camera control, VFX-style experimentation | Runway | Flexible prompting, rapid iteration, creative scene exploration |
| Story flow, multi-shot continuity | Kling | Structured sequences, consistent elements, better narrative control |

Most production workflows do not rely on a single model anymore. A single project might require Seedance for hero shots, WAN for motion-heavy scenes, and Kling for stitching narrative sequences. Managing that across separate tools slows you down and fragments your workflow.

This is exactly the gap invideo fills. It changes how you approach AI video generation at a workflow level. You no longer treat models as separate tools that require setup, context rebuilding, and repeated iteration. Instead, you work inside a single system where multiple AI video generation models are already integrated and ready to use. You can switch between Seedance, WAN, Kling, or Sora 2 depending on the shot you need, without breaking your sequence or starting over. That continuity matters when you are building multi-shot videos that demand consistency across scenes.

It also extends beyond access. Invideo gives you a production layer on top of these models. You can generate longer-form videos instead of isolated clips, edit scenes using text without regenerating everything, and reuse assets across different outputs. The platform supports a wide range of models, stock libraries, and audio tools, which means your entire pipeline stays in one place. You spend less time managing tools and more time shaping the final output to match your brand.

The Power of Multi-Model Workflows

No single model covers the full scope of production in 2026. Each one handles a specific layer of the process, and strong outputs come from combining them with clear intent. You use Seedance to define the visual tone in your hero shots, WAN to execute sequences where motion and realism matter, and Kling to maintain continuity across supporting scenes.

This approach changes how you create videos. Instead of forcing one model to do everything, you assign roles based on strengths and assemble the final output step by step. That shift leads to better consistency, fewer regeneration cycles, and footage that holds up across the entire timeline.
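The role-based approach described above can be written down as a simple shot plan. The sketch below is a planning aid only and calls no real model or invideo API: the role labels, the `plan_batches` helper, and the sample shots are hypothetical, while the role-to-model pairing follows the text (Seedance for hero shots, WAN for motion-heavy scenes, Kling for supporting scenes).

```python
from collections import defaultdict

# Role-to-model pairing taken from the workflow described above.
# The role labels themselves are assumptions for this example.
MODEL_FOR_ROLE = {
    "hero": "Seedance",
    "motion": "WAN",
    "supporting": "Kling",
}


def plan_batches(shots: list[tuple[str, str]]) -> dict[str, list[str]]:
    """Group shot names by the model assigned to each shot's role."""
    batches: dict[str, list[str]] = defaultdict(list)
    for name, role in shots:
        if role not in MODEL_FOR_ROLE:
            raise ValueError(f"unknown role: {role!r}")
        batches[MODEL_FOR_ROLE[role]].append(name)
    return dict(batches)


# Illustrative shot list for a short brand video.
shots = [
    ("opening product close-up", "hero"),
    ("runner crossing the street", "motion"),
    ("customer at the counter", "supporting"),
    ("closing logo reveal", "hero"),
]

plan = plan_batches(shots)
for model, names in plan.items():
    print(f"{model}: {names}")
```

Grouping shots by model before you generate lets you run each model's shots together, which keeps references and style settings consistent within a batch.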

Check out invideo today!

FAQs

1. Which model is best for YouTube automation?

Kling works best for YouTube automation because it handles multi-shot sequences and narrative flow without breaking continuity, which makes it the strongest option for building structured, repeatable video formats at scale. Many creators use invideo to combine Kling with other models in a broader multi-model workflow for faster, scalable content production.

2. Does Seedance handle text-to-video better than Runway?

Seedance interprets text prompts with more visual consistency and cinematic intent, and it delivers more structured outputs, especially for cinematic scenes. Runway works better for experimentation and testing ideas. Creators often move to Seedance via invideo when they need reliable outputs, then refine those outputs inside invideo for production-ready videos.

3. Is WAN better for realistic human faces?

WAN performs well with motion and body coordination, but it is not specifically optimized for facial consistency across shots. You get better results when you combine WAN with other models through invideo to balance motion and identity.

4. Can I use Seedance for free on invideo?

Invideo offers access to multiple models, including Seedance, through its plans and credit system. You can explore features with trial access, but full usage depends on your subscription and credits.

5. Which AI video model is the fastest in 2026?

WAN and Runway are among the fastest for generating quick iterations, especially with simpler prompts. Invideo helps you balance speed and quality by letting you switch models based on the task.