TL;DR: WAN 2.5 Review

- WAN 2.5 enables high-fidelity text-to-video and image-to-video creation with native audio sync.
- It understands cinematic camera language, making shot control predictable and repeatable.
- Compared to WAN 2.2, Veo 3, and Sora 2, it prioritizes short, usable clips over scale.

Ever felt like your video projects are stuck in post-production purgatory?

You sketch out that perfect dolly zoom, line up voiceover, and sync audio for hours, only to realize the pacing feels off and the budget is already stretched. Filmmakers and editors know this grind all too well.

Alibaba’s WAN 2.5 flips that script. Imagine typing “crane shot over a neon Tokyo street with lip-synced narration” and getting a polished 1080p clip with music, ambient sound, and controlled motion in under a minute. No patchwork timelines. No Frankensteined edits. Yes, that’s possible with platforms like invideo that make it easy to access WAN 2.5 and move straight from idea to output.

Curious what that means in practice? This WAN 2.5 AI video editor review breaks it down with real workflows, clear feature analysis, WAN 2.5 vs 2.2 upgrades, and a grounded Sora 2 vs Veo 3 vs WAN 2.5 comparison so you can choose the right model for your next project.

WAN 2.5 Features

WAN 2.5 AI packs powerful video capabilities into one accessible model. Its core features include:

1. Dual Video Generation Modes

Whether you start from a text prompt or a reference image, WAN 2.5 can turn it into video. With text-to-video, you can build entire scenes from scratch, including characters, environments, and motion, using only text prompts.

On the other hand, the image-to-video option lets you animate a single reference image while preserving its visual structure.

2. Native Audio and Video Sync

WAN 2.5 can produce background music, ambient sounds, and spoken dialogue aligned with on-screen action. When a prompt includes speech, the model also applies basic lip-sync automatically.

This ensures timing stays consistent throughout the clip, making iterations faster when refining dialogue or pacing.

3. Cinematic Prompt Understanding

WAN 2.5 responds accurately to the camera language used in film and video production. Prompts that include terms like dolly movement, crane shots, parallax motion, or controlled zooms result in more predictable shot composition than vibe-based descriptions alone.

You can also iterate using instruction-style updates, adjusting camera movement, framing, or pacing without rewriting the entire prompt from scratch.
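One way to picture this kind of iteration is to keep the prompt as separate parts and change only the part that needs to move. The sketch below is plain Python for organizing prompt text on your side; `ShotPrompt` and its field names are hypothetical and are not part of WAN 2.5 or invideo, which simply receive the final prompt string.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ShotPrompt:
    """Hypothetical container for the parts of a prompt (not a WAN 2.5 API)."""
    subject: str
    camera: str
    pacing: str

    def text(self) -> str:
        # Join the parts into the single prompt string you would paste in.
        return f"{self.subject} Camera: {self.camera}. Pacing: {self.pacing}."


first_take = ShotPrompt(
    subject="A young mage holds a glowing staff beneath ancient trees.",
    camera="gentle crane movement downward",
    pacing="slow",
)

# Second iteration: change only the camera move and keep everything else.
second_take = replace(first_take, camera="slow dolly-in toward the mage")

print(second_take.text())
```

Because the subject and pacing are carried over untouched, each new take differs from the last in exactly one respect, which makes it easier to tell which change caused which result.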

4. Improved Motion and Visual Consistency

Compared to earlier versions, WAN 2.5 delivers smoother motion and stronger frame-to-frame stability. Characters maintain form, objects stay anchored, and environments remain visually coherent throughout the clip.

The model also handles in-frame text and structured graphic elements more reliably, which is important for commercial ads, UI-focused videos, and explainer-style content.

5. Multi-Language Support

In WAN 2.5, you can enter prompts in many languages. It supports English, Chinese, Spanish, and French, among others, and interprets them directly during generation. This also enables dialogue and lip-synced speech in different languages, making it easier to produce localized video content for global audiences.

WAN 2.5 vs. WAN 2.2 vs. Veo 3 vs. Sora 2

The current generation of AI video models reflects very different priorities. Alibaba’s WAN models focus on short-form video generation with native audio and predictable outputs.

Google’s Veo 3 emphasizes cinematic quality and realism but remains tightly gated. OpenAI’s Sora 2 pushes long-form, world-consistent video generation, prioritizing scale and visual coherence over granular control.

The table below breaks down how WAN 2.5, WAN 2.2, Veo 3, and Sora 2 compare across core capabilities, maturity, and real-world usability:

How WAN 2.5 Transforms Your Workflow

WAN 2.5 streamlines video creation across multiple roles. Whether you are a filmmaker, marketer, social creator, or business owner, you can quickly visualize concepts, test ideas, and produce content.

Check out the table below to see exactly how WAN 2.5 supports each role, what it excels at, and the key limitations to keep in mind:

How to Use WAN 2.5 on invideo

The WAN 2.5 AI video generation model is available on invideo. Here’s how you can access it to whip up cinematic clips in minutes:

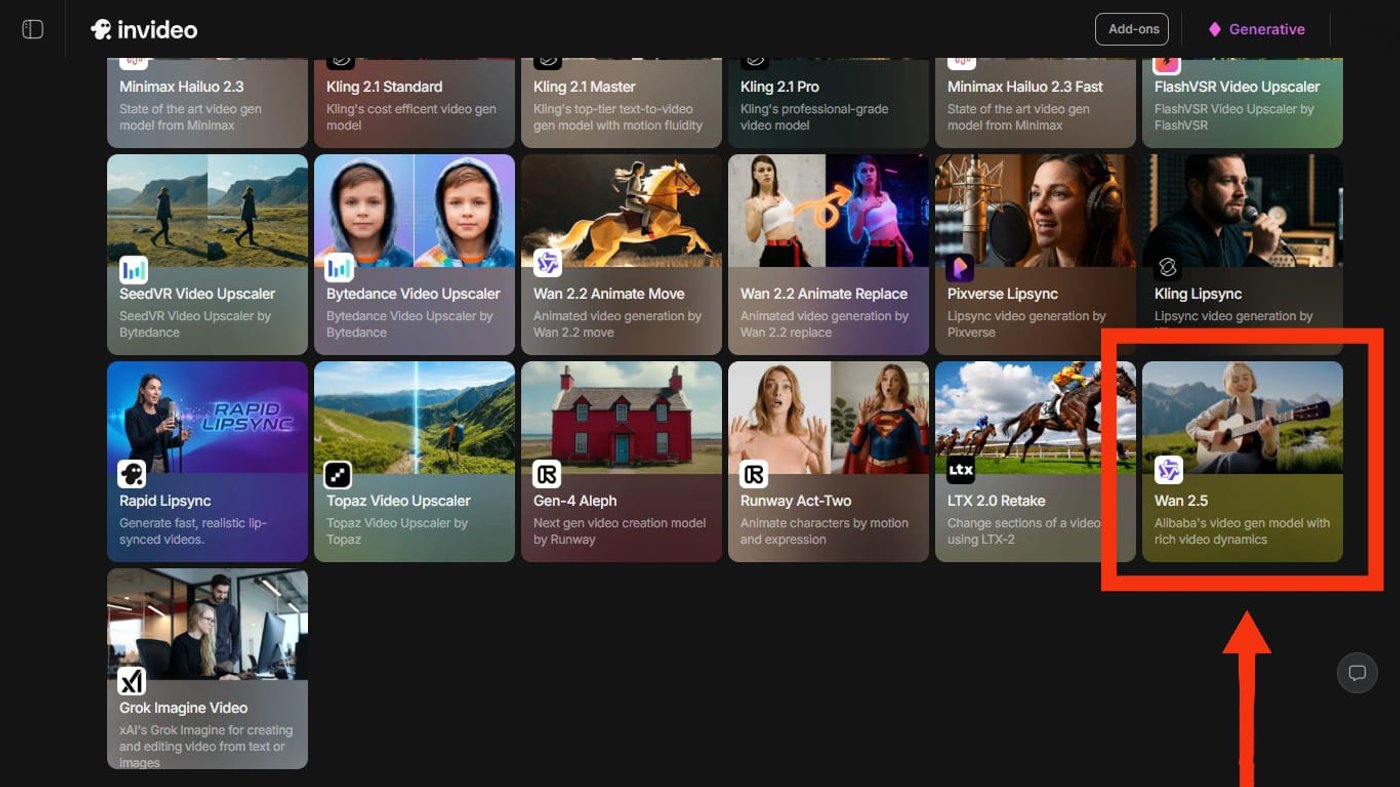

1. Select WAN 2.5 from “Agents & models”

Once you log in to invideo, scroll down to the “Agents & models” section on your home screen. Select WAN 2.5 from the list and start a new project.

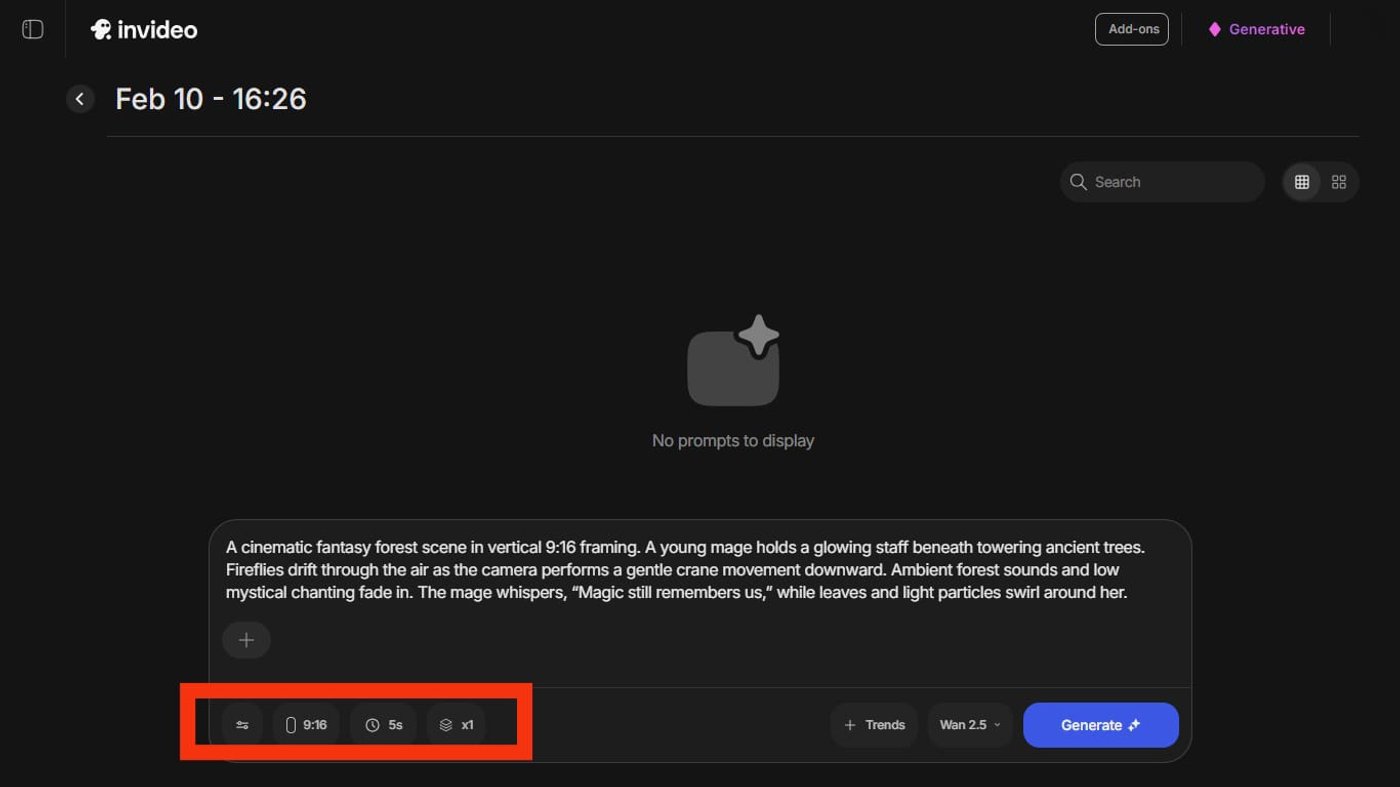

2. Enter Your Prompt

WAN 2.5 lets you generate videos through both text prompts and images.

This means you can either upload a reference image and direct its animation through a supporting prompt, or start entirely from scratch (like we did here!) with a well-defined prompt that includes your subject, storyline, dialogue, camera movements, and so on.

For instance, here’s a prompt we used to generate an anime-style cinematic clip:

Prompt: A cinematic fantasy forest scene in vertical 9:16 framing. A young mage holds a glowing staff beneath towering ancient trees. Fireflies drift through the air as the camera performs a gentle crane movement downward. Ambient forest sounds and low mystical chanting fade in. The mage whispers, "Magic still remembers us," while leaves and light particles swirl around her.

Once done, set the resolution (480p, 720p, or 1080p), the duration (5 seconds or 10 seconds), and the framing of your output (if it’s not explicitly mentioned in your prompt already), and hit “Generate.”
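If you plan several clips before opening invideo, it can help to write the settings down and check them against the options listed above. The following is a minimal sketch in plain Python; `ClipSettings` is a hypothetical note-keeping helper, not an invideo or WAN 2.5 API, and the framing field is left free-form because this article does not list a fixed set of framing options.

```python
from dataclasses import dataclass

# Options named in this article for WAN 2.5 on invideo.
ALLOWED_RESOLUTIONS = ("480p", "720p", "1080p")
ALLOWED_DURATIONS_S = (5, 10)


@dataclass(frozen=True)
class ClipSettings:
    """Hypothetical record of one clip's settings (not an invideo API)."""
    resolution: str
    duration_s: int
    framing: str  # e.g. "9:16"; free-form, no fixed list is assumed

    def __post_init__(self) -> None:
        # Reject values that are not among the options the article lists.
        if self.resolution not in ALLOWED_RESOLUTIONS:
            raise ValueError(f"resolution must be one of {ALLOWED_RESOLUTIONS}")
        if self.duration_s not in ALLOWED_DURATIONS_S:
            raise ValueError(f"duration must be one of {ALLOWED_DURATIONS_S} seconds")


# Settings matching the vertical forest prompt above.
settings = ClipSettings(resolution="1080p", duration_s=10, framing="9:16")
print(settings)
```

Asking for something like `ClipSettings("4K", 10, "16:9")` raises a `ValueError`, which catches a plan that the selector would not accept before you spend a generation on it.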

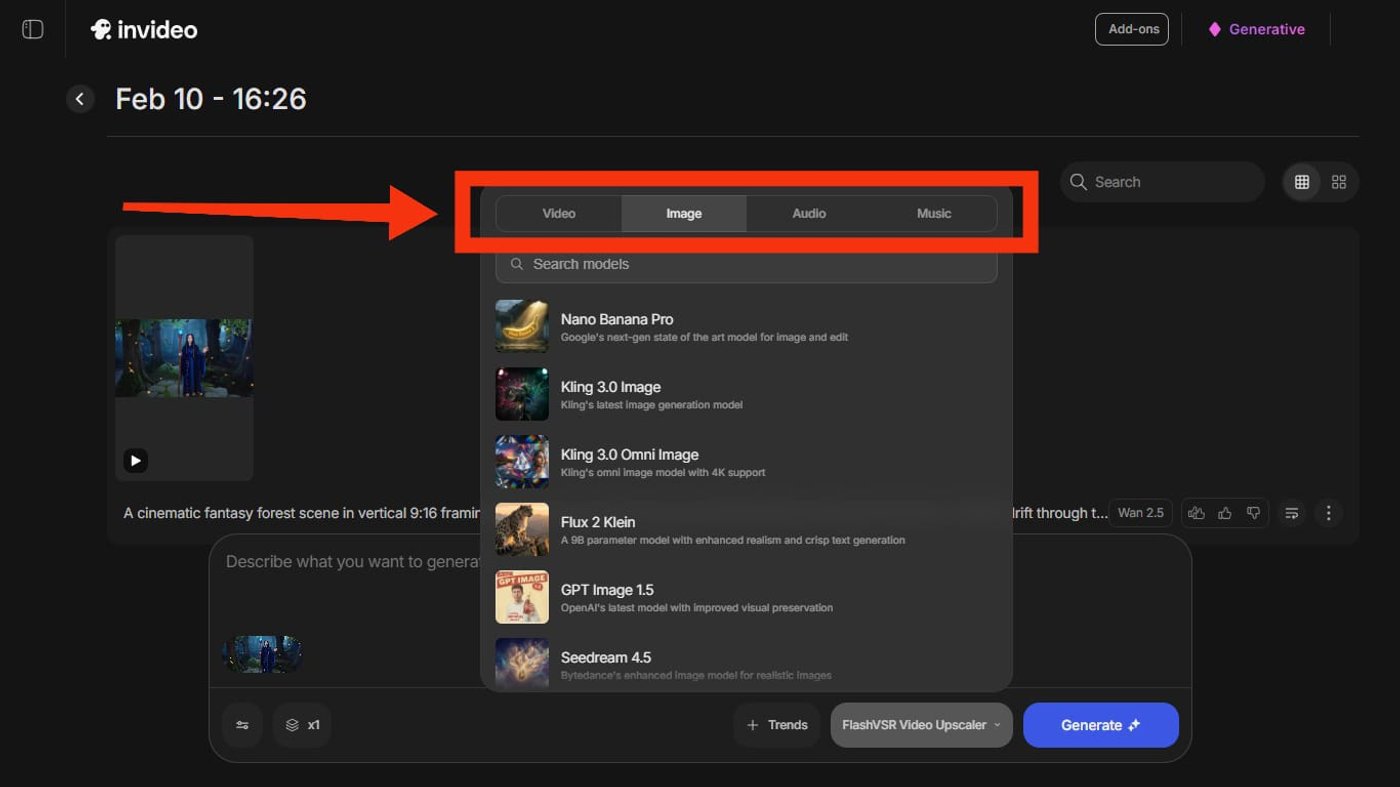

3. Refine the Elements

Within a few minutes, your video is ready. And if something feels off, you do not have to start from scratch.

With invideo, you can easily upgrade the animation, visuals, audio, or background music. Just pick a different model, hit Generate, and let the video improve while everything else stays the same.

Curious to see the clip our prompt generated? Check it out:

Turn Text and Images into Studio-Ready Videos with WAN 2.5 on invideo

With WAN 2.5 AI video generation, you can easily turn ideas into realistic cinematic stories with captions, voiceovers, music, and perfectly timed pacing. Start with a workflow, then build a library of prompts to make the process faster and more consistent. Once you have your system in place, producing high-quality videos becomes effortless and repeatable.

Ready to see your concepts come alive with WAN 2.5? Sign up on invideo and generate your next standout video today.

Frequently Asked Questions (FAQs):

1. What is WAN 2.5, and what can it generate?

WAN 2.5 is a generative AI model created by Alibaba that lets you generate high-quality, cinematic AI video clips with synchronized audio. It supports both text-to-video and image-to-video generation, making it suitable for creators of all kinds.

2. Is WAN 2.5 image-to-video better than text-to-video?

No, WAN 2.5 image-to-video is not inherently better than text-to-video. Each offers different advantages. Image-to-video provides stronger visual consistency, while text-to-video is more flexible for ideation when no visual starting point exists. The better option depends on whether your priority is control or creative exploration.

3. How long can WAN 2.5 clips be?

WAN 2.5 is designed to generate short video clips with native audio. The maximum clip length is 10 seconds. On invideo, however, you can generate videos of any length with WAN 2.5.

4. How do you write prompts for WAN 2.5?

Start with the subject and action to anchor the main character or object in motion. Follow with style and camera movement for precise cinematic control, like a slow zoom or tracking shot. Layer in mood and lighting next to shape the tone, from warm golden hour glow to moody blue shadows. Finish with audio cues to sync narration, music, or lip-sync perfectly.
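The ordering above can be sketched as a small helper that assembles the four layers in sequence. This is an illustration only: `build_prompt` and its parameter names are hypothetical, and nothing here calls WAN 2.5 or invideo. It just produces the text you would paste into the prompt box.

```python
def build_prompt(subject_action: str, style_camera: str = "",
                 mood_lighting: str = "", audio: str = "") -> str:
    """Join prompt layers in the order: subject, camera, mood, audio."""
    if not subject_action.strip():
        raise ValueError("a prompt needs at least a subject and action")
    layers = [subject_action, style_camera, mood_lighting, audio]
    # Skip layers left empty, and trim trailing periods so each layer
    # ends with exactly one period in the joined result.
    cleaned = [layer.strip().rstrip(".") for layer in layers if layer.strip()]
    return ". ".join(cleaned) + "."


prompt = build_prompt(
    subject_action="A young mage raises a glowing staff",
    style_camera="Anime style with a gentle crane movement downward",
    mood_lighting="Warm golden hour glow through ancient trees",
    audio="Ambient forest sounds and low mystical chanting",
)
print(prompt)
```

Keeping the layers as separate arguments also makes it easy to reuse a subject with a different camera move or audio cue when you build up a library of prompts.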

5. How do you access WAN 2.5?

You can access WAN 2.5 directly inside invideo. Go to “Agents & models,” and select WAN 2.5. Enter your text prompt or upload a reference image, generate the clip, and then extend or edit it using invideo’s editor.

6. How is WAN 2.5 different from WAN 2.2?

WAN 2.5 improves on WAN 2.2 by offering better motion realism, stronger prompt understanding, and more consistent visual output across frames. It is more reliable for controlled video generation and produces higher-quality results with fewer visual artifacts than earlier versions.

7. How is WAN 2.5 different from WAN 2.6?

WAN 2.6 builds on WAN 2.5 with improved output stability, better prompt understanding, and stronger scene continuity across frames. It also introduces more advanced generation capabilities and supports more complex scenes. WAN 2.5 remains suitable for simpler workflows, while WAN 2.6 is better suited for more advanced video generation use cases.