Key Takeaways

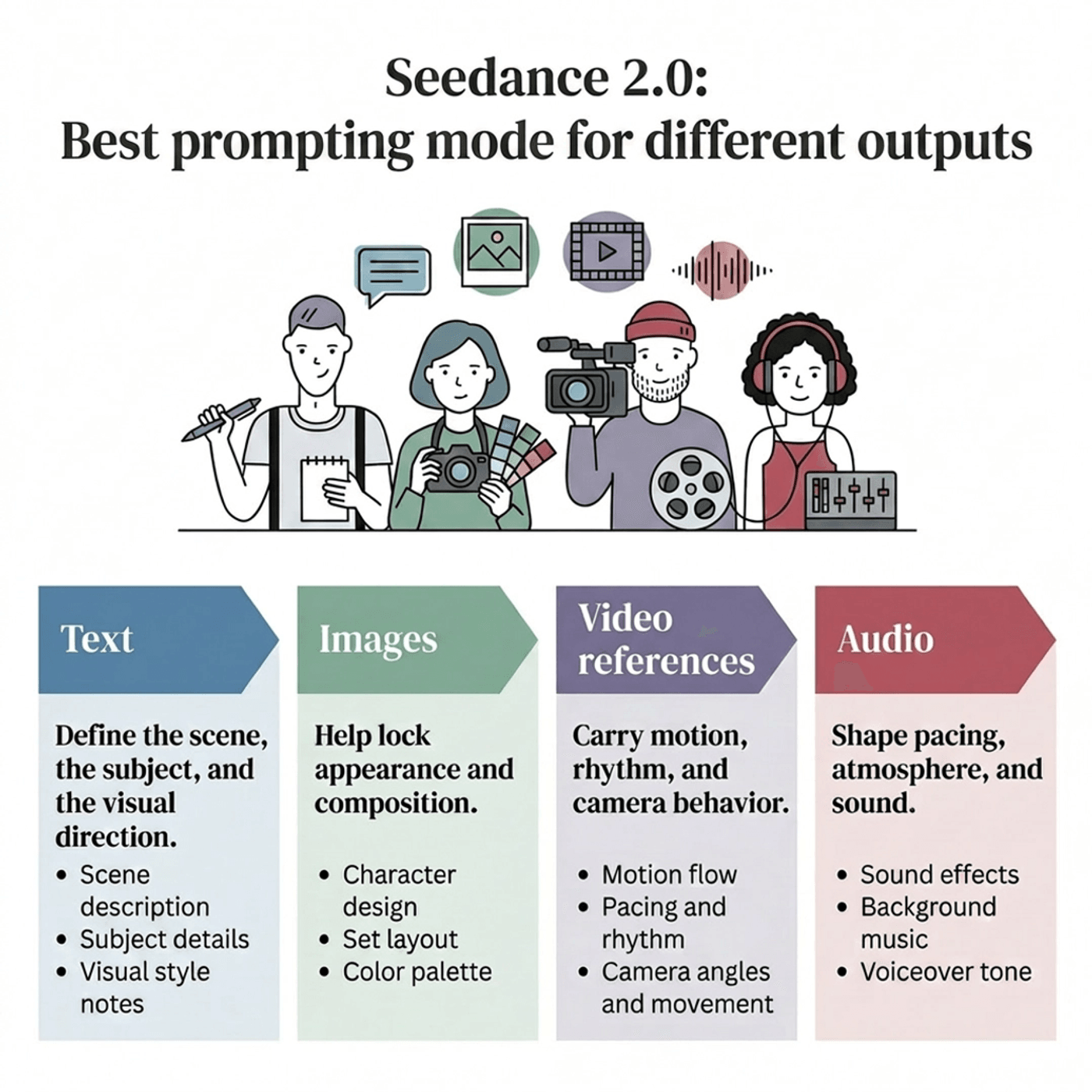

1. Seedance 2.0 works best by splitting control across text, images, video references, and audio.

2. The simplest way to prompt Seedance 2.0 is this:

   - Use text for building environments
   - Use reference video to capture motion

3. In a good Seedance 2.0 prompt, text clearly defines the world, images lock the identity, video guides movement, and audio shapes rhythm & sound.

4. Seedance 2.0 prompting mistakes to avoid:

   - Too many style adjectives
   - Unclear reference roles
   - Low-quality source clips

5. If you want more consistent Seedance 2.0 AI video generation, start with short tests, and keep references clean.

Most people who try Seedance 2.0 for the first time make the same mistake. They treat it like a text-only video model.

They write a dense paragraph full of style words, dramatic adjectives, and camera terms, then expect the model to figure out the timing, pacing, movement, and visual consistency on its own. Sometimes that works. More often, it doesn’t.

Seedance 2.0 is strongest when you separate responsibilities clearly:

- Text should define the scene, the subject, and the visual direction
- Images should help lock appearance and composition
- Video references should carry motion, rhythm, and camera behavior
- Audio should shape pacing, atmosphere, and sound

Once you understand that, Seedance 2.0 AI video generation gets so much better. You stop prompting like a gambler and start directing like an editor.

This guide is built around that idea. If you want to know how to use Seedance 2.0 in a way that actually improves output quality, this is the framework that matters.

What Makes Seedance 2.0 Different?

At a practical level, Seedance 2.0 is a multimodal video model. That means it can work from more than just text. You can combine written prompts with images, video references, and audio references in the same prompt window.

This should instantly change how you think about prompting. Seedance 2.0 can accept up to:

- 9 image references
- 3 video references
- 3 audio references

The combined cap is 12 files, so you cannot fill all three categories to their maximum (9 + 3 + 3 = 15) in a single prompt.
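If you assemble reference sets in a script or a checklist, a quick count check catches the cap before you upload anything. This is a minimal, hypothetical Python sketch: `check_reference_counts` is not part of any Seedance or invideo API, and the limits are simply the ones listed above, so confirm them against the platform you are using.

```python
# Limits as stated in this guide; confirm them against the platform you use.
MAX_PER_KIND = {"image": 9, "video": 3, "audio": 3}
MAX_TOTAL = 12


def check_reference_counts(counts: dict[str, int]) -> list[str]:
    """Return a list of problems with a planned reference set (empty means OK).

    `counts` maps a reference type ("image", "video", "audio") to how many
    files of that type you plan to attach.
    """
    problems = []
    for kind, n in counts.items():
        if kind not in MAX_PER_KIND:
            problems.append(f"unknown reference type: {kind}")
        elif n > MAX_PER_KIND[kind]:
            problems.append(f"{kind}: {n} exceeds the limit of {MAX_PER_KIND[kind]}")
    # The overall cap applies even when every per-type limit is respected.
    total = sum(counts.values())
    if total > MAX_TOTAL:
        problems.append(f"total: {total} exceeds the limit of {MAX_TOTAL}")
    return problems


# Maxing out every category still breaks the overall cap.
print(check_reference_counts({"image": 9, "video": 3, "audio": 3}))
# → ['total: 15 exceeds the limit of 12']
```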

In older workflows, you had to describe almost everything in words and hope the model interpreted your prompt correctly.

If you wanted a certain camera move, you described it. If you wanted a specific rhythm, you described that too. If you wanted the subject to look consistent across frames, you had to keep rewriting the same descriptors and hope the model held onto them.

Seedance 2.0 gives you a better option. Instead of describing everything, you can show the model what should stay fixed and what should move. You can anchor a character with an image. You can borrow camera behavior from a short reference clip. You can use audio to push rhythm and sound cues.

This makes your prompting a lot more specific.

The shift is simple but important. Better results come less from writing longer prompts and more from assigning each input a clear job.

The Mental Model That Makes Seedance 2.0 Easier To Use

If you remember one thing from this guide, make it this:

Text is best for spatial decisions. Reference video is best for temporal decisions.

Spatial decisions are about what the world looks like.

That includes the subject, wardrobe, product appearance, location, lighting, mood, color treatment, and overall style.

Text is still very good at these things. If you want a rainy neon street, a minimalist studio, a warm documentary look, or a polished product scene, text can establish that clearly.

Temporal decisions are about what happens over time. That includes timing, rhythm, gesture cadence, camera motion, beat sync, and the exact shape of movement. This is where text often starts to wobble. You can write “slow push-in” or “subtle head turn on the final beat,” but those instructions are still being interpreted. A reference clip does not describe the move. It contains it.

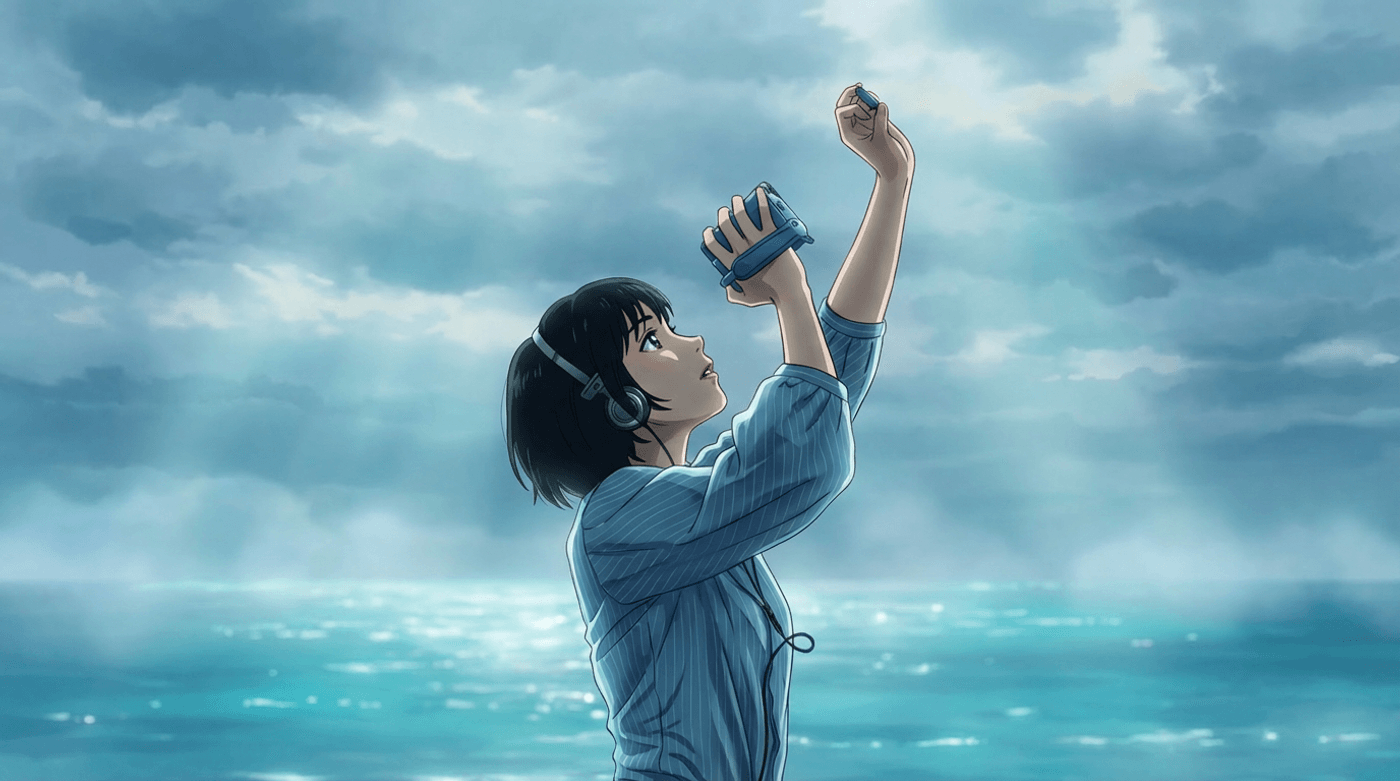


That is why many weak Seedance 2.0 AI video outputs are not really prompt-writing failures. They are control failures. The user is asking text to solve a motion problem.

A better working rule is this. Use text to define the world. Use images to lock identity and look. Use reference video to lock movement and timing. Use audio when rhythm or sound matters.

Once you approach prompting that way, the model becomes much easier to steer.

Choose The Right Workflow Before You Write The Prompt

A lot of prompting problems start before the first sentence is even written: the user has picked the wrong workflow.

If you are still exploring an idea, text-to-video is usually the best place to start.

It is faster for mood tests, concept exploration, and loose visual direction. If you are trying to find the right tone for a product scene, a travel moment, or an abstract cinematic idea, text-only prompting gives the model room to explore.

If you already know what the scene should look like and want to preserve a starting image, image-to-video is the better option. This is especially useful when the character, product, or composition matters and you want motion without losing the visual anchor.


If timing, choreography, gesture rhythm, or camera movement matter, use reference-to-video. This is where Seedance 2.0 becomes much more controllable. A clean reference clip can do more for motion quality than another fifty words ever will.

A practical workflow usually looks like this. Start with text when you are finding the idea. Move to image-first prompting when identity and composition need to hold. Switch to reference-driven prompting when motion starts to matter more than experimentation.

The Prompt Structure That Works Best

One thing to note here is how Seedance 2.0 reads prompts. The first 20 to 30 words carry the most weight.

The model uses the opening of your prompt to lock in the subject and the core action before it processes the rest. If your subject is buried in the middle of a long descriptive paragraph, the model is more likely to drift, hallucinate new characters in multi-shot sequences, or lose identity between cuts.

The practical rule is simple. Lead with who or what is in the frame, then what they are doing. Save the style, lighting, and atmosphere descriptors for after the subject is anchored.

Beyond this, you do not need a complicated formula to write a good Seedance prompt, but you do need structure.

A reliable pattern is this:

| Prompt Element | What It Should Do |
|---|---|
| Subject | Define who or what is in frame |
| Action | Describe the main movement or event |
| Scene | Establish the environment |
| Camera | Clarify shot size, angle, and movement |
| Style | Set the visual treatment |
| Audio | Direct sound, music, or dialogue if needed |
| Constraints | Reinforce quality and consistency goals |

Here is what that looks like in practice.

A weak prompt might say:

A cool cinematic ad for a perfume bottle, dramatic, stylish, premium, beautiful lighting. Wide camera, realistic.

That sounds descriptive, but it gives the model very little usable direction.

A stronger version would say:

A clear glass perfume bottle sits on a black stone pedestal in a dark studio. Condensation rolls slowly down the glass as a single drop falls onto the surface below. Medium close-up with a slow circular dolly move. Soft side lighting, high contrast reflections, luxury beauty-ad look. Subtle room tone and one sharp glass tap. Stable product shape, clean label detail, natural motion.

The second prompt works better because each part has a job.

- The subject is clear
- The action is simple
- The scene is grounded
- The camera move is explicit
- The style is compact
- The audio is intentional
- The constraints reinforce what should stay stable

That is the core of how to use Seedance 2.0 well. Clarity beats intensity.
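The seven-part structure maps naturally onto a small template. The sketch below is a hypothetical Python helper: `SeedancePrompt` and `subject_in_opening` are illustrative names, not a real API. It joins the elements in table order so the subject and action always lead, and it rebuilds the perfume prompt above (the scene field stays empty there because the subject sentence already places the bottle in a dark studio). The 30-word window is an assumption borrowed from the 20-to-30-word guideline, not a documented model limit.

```python
from dataclasses import dataclass, fields


@dataclass
class SeedancePrompt:
    """Hypothetical container for the seven prompt elements in the table above."""
    subject: str           # who or what is in frame; always goes first
    action: str            # the main movement or event
    scene: str = ""        # environment (may already be covered by the subject line)
    camera: str = ""       # shot size, angle, and movement
    style: str = ""        # visual treatment
    audio: str = ""        # sound, music, or dialogue, if needed
    constraints: str = ""  # quality and consistency goals

    def build(self) -> str:
        # Join the non-empty elements in declaration order, so subject and
        # action always land in the opening words of the prompt.
        parts = (getattr(self, f.name).strip() for f in fields(self))
        return " ".join(p for p in parts if p)


def subject_in_opening(prompt: str, keyword: str, window: int = 30) -> bool:
    """True if `keyword` appears within the first `window` words of the prompt."""
    opening = " ".join(prompt.split()[:window]).lower()
    return keyword.lower() in opening


perfume = SeedancePrompt(
    subject="A clear glass perfume bottle sits on a black stone pedestal in a dark studio.",
    action="Condensation rolls slowly down the glass as a single drop falls onto the surface below.",
    camera="Medium close-up with a slow circular dolly move.",
    style="Soft side lighting, high contrast reflections, luxury beauty-ad look.",
    audio="Subtle room tone and one sharp glass tap.",
    constraints="Stable product shape, clean label detail, natural motion.",
)
prompt = perfume.build()
print(prompt)
print(subject_in_opening(prompt, "perfume bottle"))  # → True
```

Keeping the elements as separate fields also makes iteration easier: you can swap only the camera or style line between tests while the subject stays fixed.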

Every Input Needs a Job

This is where many multimodal prompts break.

People upload several images, a video clip, and an audio file, then write a vague prompt that loosely mentions them. The model then has to guess what each asset is supposed to control. That usually leads to blending, drift, or dropped references.

The better approach is to decide in advance what each input is responsible for.

Images are best when you want to lock subject identity, wardrobe, product appearance, environment design, or the opening frame. A still image is also useful when you want to guide style without importing motion from another clip.

Video references are best for camera motion, gesture timing, movement rhythm, choreography, and pacing. If the exact feel of the motion matters, this should come from video, not from text alone.

Audio should do one of three things. It should establish mood, establish rhythm, or establish specific sound cues. If it is not doing one of those jobs, it may not need to be there.

The simplest multimodal prompts often outperform the most crowded ones. One strong subject image, one clean motion clip, and one focused prompt can be much more effective than a pile of loosely related assets.

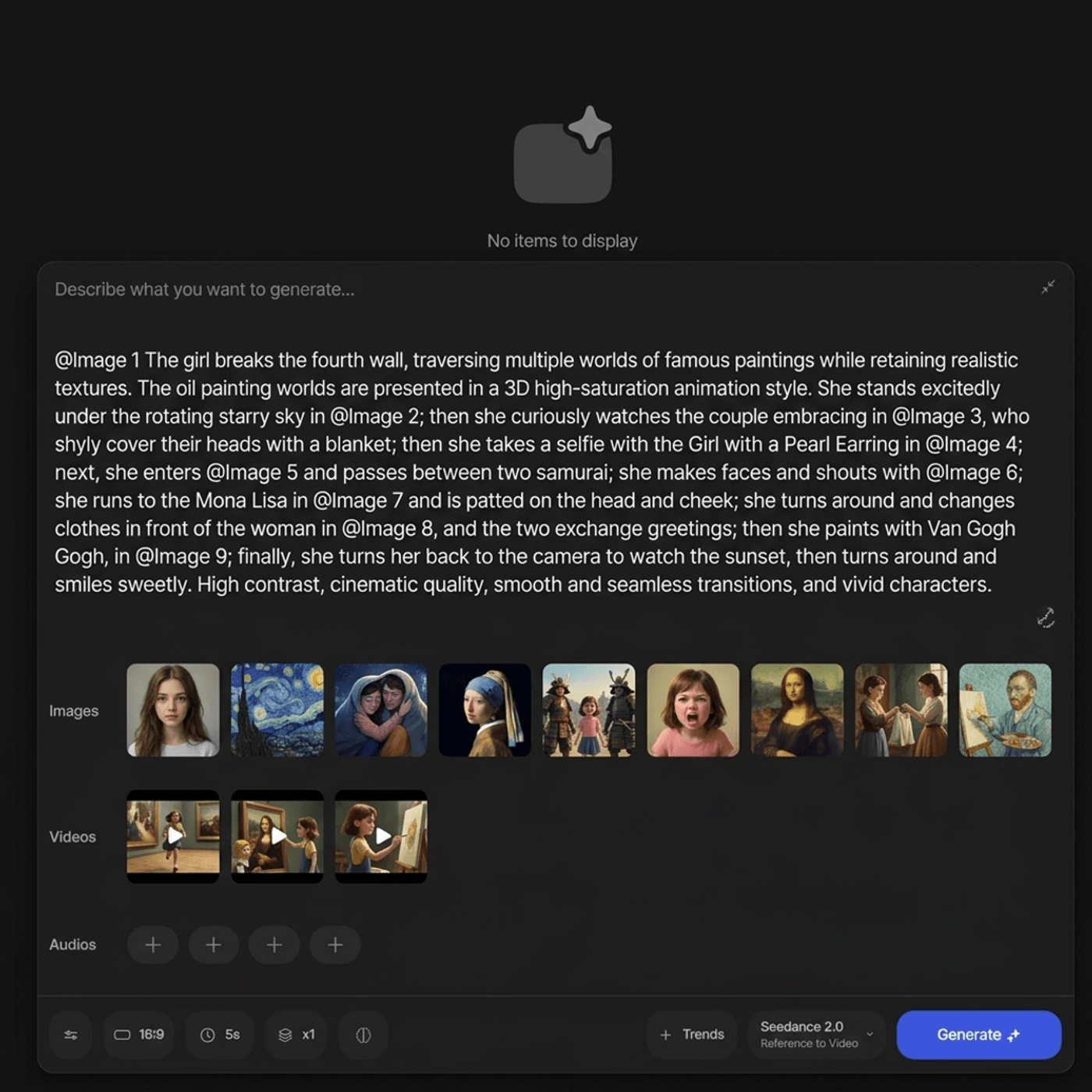

The @ Mention System Is Where Real Control Starts

When you use uploaded assets, Seedance 2.0 works better when you explicitly tell it what each file is for.

Do not just mention an image or clip. Assign it a role with an ‘@’.

Example:

@image1 Reference character

@image2 Background reference

Here is a simple reference table for the kinds of roles that matter most.

| Use case | What to assign | What to clarify in the prompt |
|---|---|---|
| Opening image | First frame image | Use it as the starting visual anchor |
| Character identity | Reference image | Use it for appearance only |
| Product consistency | Product image | Keep shape, material, and label stable |
| Camera motion | Video clip | Follow the movement or pacing |
| Choreography | Video clip | Reuse the motion pattern |
| Background music | Audio file | Use it for rhythm or mood |
| Clip extension | Existing video | Continue the scene for a set duration |

The important part is not the symbol itself. The important part is the instruction.

If you say an image is for appearance only, the model has a better chance of keeping the subject while ignoring the image’s background. If you say a video is for camera movement, the model is less likely to copy irrelevant visual details from that clip.

The more references you use, the more explicit you need to be.
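If you write many multimodal prompts, generating the role lines from a mapping makes it hard to forget one. This is a hypothetical Python sketch: `reference_lines` is an illustrative helper, and tags other than `@image1` and `@image2` are assumptions about naming, so match whatever your upload interface actually shows.

```python
def reference_lines(roles: dict[str, str]) -> str:
    """Render one '@tag role' line per uploaded asset, in insertion order."""
    lines = []
    for tag, role in roles.items():
        if not role.strip():
            # An asset without a stated job is what leads to blending and drift.
            raise ValueError(f"@{tag} has no role assigned")
        lines.append(f"@{tag} {role.strip()}")
    return "\n".join(lines)


# @video1 is an illustrative tag name; use the naming your interface shows.
roles = {
    "image1": "Reference character. Use for appearance only, ignore its background.",
    "image2": "Background reference.",
    "video1": "Camera motion only. Follow the slow push-in, not the subject.",
}
print(reference_lines(roles))
```

Raising an error on an empty role is a deliberate design choice: it forces every uploaded file to have a job before the prompt is sent.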

When Text-Only Prompting Still Works Best

All of this does not mean Seedance 2.0 needs references for everything. It doesn’t.

Text-only prompting is still a great choice when you are exploring a visual concept, testing a mood, or generating something more atmospheric than exact.

It is especially useful when the scene is simple and the main goal is look, not timing.

For example, if you want a cinematic moment, you can often get there with a clean text prompt:

A well-groomed hotel concierge in a dark green vintage uniform and matching pillbox hat stands behind a polished wooden reception desk in a luxurious old-world hotel. He looks directly at the camera with a subtle amused smile, lifts a cookie to his mouth, takes a bite, chews naturally, and reacts with a quiet satisfied expression as a few crumbs fall onto the desk. Static medium shot, centered framing, warm amber lighting, rich wood-paneled background, shallow depth of field, cinematic realism, natural hand movement, realistic facial expressions, stable costume details, clean elegant atmosphere.

If you want a product mood test, you might write:

A matte silver smartwatch rests on a concrete surface as light passes slowly across the screen. Tight close-up, shallow depth of field, minimalist studio look. Subtle electronic hum.

If you want an abstract short scene, you can keep it even simpler:

A paper lantern floats through a dark room filled with dust and soft amber light. The camera follows slowly from behind. Dreamlike, quiet, intimate.

These work because they stay focused. One subject. One action. One dominant visual idea.

Text-only prompting starts to fail when you ask it for precise timing, complex choreography, or repeatable camera behavior. That is the moment to bring in references.

How To Prompt With a Reference Video

When a reference clip is involved, your prompt should get shorter, not longer.

The clip is already carrying motion information. Your text should support it, not compete with it.

The best things to specify in text are the style capsule, the subject identity, the camera intent, and any pace anchors that matter.

The style capsule is just a compact phrase that tells the model what visual treatment to apply. Something like “soft daylight, warm skin tones, shallow depth of field” is enough. You do not need a stack of adjectives.

Subject identity should focus on stable characteristics. If the clip is guiding a product rotation, the prompt should reinforce what the product is, what material it has, and what must stay recognizable. If the clip is guiding a person’s movement, the prompt should keep the subject description consistent without overloading it.

Camera intent still matters even when the reference contains the camera move. If the clip has a pan, say so. That tells the model the movement is intentional, not accidental shake.

Pace anchors are useful when the ending matters. A phrase like “hold on the final pose briefly” can help stop the model from rushing the last beat.

What should you leave out? The exact motion path. The tiny rhythm details. The frame-by-frame shape of the movement. That is what the clip is already doing.

A good reference-driven prompt might look like this:

Use the reference clip for the slow push-in and hand movement. Keep the ceramic mug shape and handle proportion stable. Replace the original setting with a warm kitchen counter in soft morning light. Neutral palette, clean reflections, natural steam. Hold the final frame slightly.

That is far more effective than trying to describe every millisecond in text.

Better Reference Clips Usually Matter More Than Better Wording

A weak reference clip can sabotage a strong prompt. A strong reference clip can rescue a simple one.

The best clips are usually short, focused, and clean.

In practice, three to eight seconds is often the most useful range. Shorter clips can feel too vague. Longer clips often contain too many competing priorities.

Try to keep the clip to one shot and one idea. If the subject is moving, do not also introduce an unnecessary camera swing unless that movement is the whole point. If the camera move is what matters, simplify the subject action.

Clean backgrounds help. Stable lighting helps. A readable silhouette helps. The model has a much easier time extracting usable motion from a clear clip than from a cluttered one.

Compression matters too. Muddy screen recordings, flicker, and blocky artifacts can leak directly into the generation. If the clip looks messy, the output often will too.


The easiest test is this: if a human viewer cannot quickly tell what the reference clip is trying to demonstrate, the model probably cannot either.
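The clip guidance above can be turned into a pre-flight checklist. In this hypothetical Python sketch, the `ClipCheck` fields are things you note by eye or measure yourself; nothing here inspects a video file, and the 3 to 8 second window is the guide's rule of thumb rather than a hard limit.

```python
from dataclasses import dataclass


@dataclass
class ClipCheck:
    """Properties you note (or measure) before using a reference clip."""
    duration_s: float
    shot_count: int              # number of distinct shots in the clip
    visible_artifacts: bool      # blockiness, flicker, muddy screen capture
    cluttered_background: bool


def clip_warnings(clip: ClipCheck, min_s: float = 3.0, max_s: float = 8.0) -> list[str]:
    """Flag the reference-clip problems described above; an empty list means no flags."""
    warnings = []
    if clip.duration_s < min_s:
        warnings.append("too short: the motion may read as vague")
    elif clip.duration_s > max_s:
        warnings.append("too long: likely to contain competing priorities")
    if clip.shot_count != 1:
        warnings.append("keep the clip to one shot and one idea")
    if clip.visible_artifacts:
        warnings.append("compression artifacts can leak into the output")
    if clip.cluttered_background:
        warnings.append("a cleaner background makes motion easier to extract")
    return warnings


print(clip_warnings(ClipCheck(5.0, 1, False, False)))  # → []
print(clip_warnings(ClipCheck(12.0, 2, True, False)))
```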

Prompt Seedance 2.0 Like a Director

Camera language is one of the fastest ways to improve Seedance 2.0 AI video outputs.

A lot of prompts say “cinematic camera movement” as if that means something precise. It doesn’t. The model responds better when the move is concrete.

- A tracking shot moves with the subject
- A pull-back reveals space
- A pan rotates horizontally in place
- A tilt moves vertically
- An orbit circles the subject
- Handheld adds energy and instability
- A gimbal move feels smoother and more polished
- A POV shot changes the whole emotional relationship between viewer and scene

Shot size matters too:

- Wide shots work best when the environment matters
- Medium shots are usually the safest choice for people, dialogue, and creator-style content
- Close-ups work best when detail and emotion matter, but they become fragile if you overload them with too much movement

In most cases, one clean camera move is better than several competing ones. “Medium shot, slow push-in” is stronger than “close-up with pan, tilt, subtle orbit, handheld energy, and dynamic cinematic movement.” The second prompt sounds sophisticated, but it is often less controllable.

If you want better outputs, always use camera language the way a director would use it: deliberately and sparingly.
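A crude lint can catch the “several competing moves” pattern before you generate. This hypothetical Python sketch matches a small vocabulary of camera terms as whole words; it will miss phrasings it does not know and can produce false positives (for example, “pan” in “frying pan”), so treat it as a review aid only.

```python
import re

# Camera-move terms used in this guide; extend for your own vocabulary.
CAMERA_MOVES = ["tracking", "pull-back", "push-in", "dolly", "pan", "tilt",
                "orbit", "handheld", "gimbal", "pov"]


def camera_moves_in(prompt: str) -> list[str]:
    """Return camera-move terms found as whole words, in vocabulary order."""
    text = prompt.lower()
    return [m for m in CAMERA_MOVES if re.search(rf"\b{re.escape(m)}\b", text)]


def has_competing_moves(prompt: str, limit: int = 1) -> bool:
    """True if the prompt names more camera moves than `limit`."""
    return len(camera_moves_in(prompt)) > limit


simple = "Medium shot, slow push-in"
busy = ("close-up with pan, tilt, subtle orbit, handheld energy, "
        "and dynamic cinematic movement")
print(camera_moves_in(simple))  # → ['push-in']
print(camera_moves_in(busy))    # → ['pan', 'tilt', 'orbit', 'handheld']
```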

Audio Is Part of the Prompt, Not a Patch Later

One of the most useful things about Seedance 2.0 AI video generation is that sound can be part of the generation logic itself.

If a product hits a surface, describe the sound. If a street scene should feel humid and busy, describe the ambient layer. If a landscape should feel distant and quiet, say that too. Sound cues help define the experience, not just the image.

A prompt like “A bottle drops into ice” leaves the sound almost entirely to the model. A more directed version reads:

A chilled bottle drops into crushed ice with a sharp clink and scattered crackle, cold vapor rising as the camera pushes closer.

This gives the model a lot more to work with.

Dialogue can be useful too, especially for short lines. But if the project depends heavily on lip-sync quality, it is worth testing before committing to a larger workflow. Sound effects and ambience often hold up more reliably than dialogue-heavy scenes.

The main rule is simple. If sound is part of the idea, put it in the prompt.

Multi-Shot Prompting Needs Structure

If you want Seedance 2.0 to generate a short sequence instead of a single shot, structure matters even more.

Do not write one long paragraph and hope the model finds the cuts.

Label the shots clearly. Give each shot one main action and one main camera instruction. If you try to cram four or five scene changes into a tiny duration, the model will compress, skip, or blur them together.

A clean multi-shot prompt might look like this in principle:

- Shot one establishes the object
- Shot two shows the key transformation
- Shot three lands the reveal

That is usually enough to feel cinematic without creating too much instability.

For example:

Shot 1: Extreme close-up of a black sneaker hitting wet pavement, droplets splashing outward. Low angle, fast shutter feel. Shot 2: Medium tracking shot as the runner moves through a neon alley, breath visible in the cold air. Shot 3: Tight push-in on the shoe logo as he stops under a red street light. Tense urban mood, cold blue palette, distant traffic and wet footsteps.

That works because the sequence is doing one thing at a time.
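Labeling can be made mechanical. This hypothetical Python helper numbers each shot, appends the shared mood, palette, and audio once at the end, and refuses sequences longer than three shots by default (an assumption based on the three-shot pattern above, adjustable via `max_shots`). The example reproduces the sneaker prompt.

```python
def multi_shot_prompt(shots: list[str], shared: str = "", max_shots: int = 3) -> str:
    """Label each shot explicitly and append shared mood/palette/audio once at the end."""
    if not shots:
        raise ValueError("need at least one shot")
    if len(shots) > max_shots:
        # Too many changes in a short clip tend to get compressed, skipped, or blurred.
        raise ValueError(f"{len(shots)} shots; keep it to {max_shots} or fewer")
    parts = [f"Shot {i}: {s.strip()}" for i, s in enumerate(shots, start=1)]
    if shared.strip():
        parts.append(shared.strip())
    return " ".join(parts)


sneaker = multi_shot_prompt(
    [
        "Extreme close-up of a black sneaker hitting wet pavement, droplets "
        "splashing outward. Low angle, fast shutter feel.",
        "Medium tracking shot as the runner moves through a neon alley, breath "
        "visible in the cold air.",
        "Tight push-in on the shoe logo as he stops under a red street light.",
    ],
    shared="Tense urban mood, cold blue palette, distant traffic and wet footsteps.",
)
print(sneaker)
```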

The Mistakes That Keep Ruining Good Prompts

The most common Seedance 2.0 prompting mistakes are small workflow errors that compound quickly.

One is asking text to solve motion problems that should be handled by a reference clip. Another is uploading too many references and expecting the model to interpret them intelligently without role assignments.

Overloading style adjectives is another frequent issue. More description does not always create more control. Often it just forces the model to satisfy competing visual instructions while dropping motion quality.

Conflicting camera language causes problems too. So does trying to cram multiple actions into a single shot. So does using low-quality references. So does treating the first generation as final.

The simplest way to avoid most of these problems is to reduce complexity. Keep the idea narrow. Keep the motion clean. Keep the camera specific. Keep the references purposeful.

How To Fix Weak Outputs

When something goes wrong, do not rewrite everything at once.

One rule worth knowing: Seedance 2.0 does not handle negative prompts well.

You cannot tell it "no blur, no warping, no shaky camera" and expect those exclusions to work. The model does not process negations the way a search engine does.

Instead, rephrase what you want as a positive instruction. Not "no distortion" but "stable face, natural proportions, clean edges."

Not "no shaky camera" but "smooth gimbal motion, locked framing."

This sounds like a small distinction.

It is not. It is the difference between an instruction the model can act on and one it quietly ignores.
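A small rewrite table makes the habit concrete. This hypothetical Python sketch swaps known negative phrases for the positive versions given above, then lists any “no X” or “without X” phrases that still need rewriting. The pattern only captures the single word right after “no” or “without”, so review flagged phrases by hand.

```python
import re

# Positive restatements taken from the examples above; add your own pairs.
POSITIVE_REWRITES = {
    "no distortion": "stable face, natural proportions, clean edges",
    "no shaky camera": "smooth gimbal motion, locked framing",
}


def rewrite_negatives(prompt: str) -> str:
    """Swap known negative phrases for their positive equivalents (case-insensitive)."""
    for negative, positive in POSITIVE_REWRITES.items():
        prompt = re.sub(re.escape(negative), positive, prompt, flags=re.IGNORECASE)
    return prompt


def leftover_negations(prompt: str) -> list[str]:
    """Find remaining 'no X' / 'without X' phrases that still need a positive rewrite."""
    return re.findall(r"\b(?:no|without)\s+[a-z-]+", prompt.lower())


draft = "Slow push-in on the mug, no shaky camera, no blur."
fixed = rewrite_negatives(draft)
print(fixed)                      # → Slow push-in on the mug, smooth gimbal motion, locked framing, no blur.
print(leftover_negations(fixed))  # → ['no blur']
```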

Once you have that in mind, here is how to actually debug the output.

1. If the motion feels jittery, start with the reference clip. Trim it tighter. Remove messy frames at the beginning or end. Simplify the background. Reduce style clutter in the prompt. Motion usually improves when the instruction becomes cleaner.

2. If the camera move gets ignored, the reference may be too subtle or too crowded. Use a stronger clip, reduce competing subject motion, and restate the move clearly in text.

3. If the style drifts, shorten the style language. Use one or two stronger anchors instead of five descriptive flourishes. If needed, add a still image for look and keep the motion clip focused on movement only.

4. If character identity breaks, simplify the scene and give the subject a stronger image reference. Identity is easier to preserve in cleaner shots than in crowded, highly dynamic scenes.

The key is to diagnose the actual problem. Do not treat every failure like a prompt-writing issue. Sometimes the prompt is fine and the reference is weak. Sometimes the references are fine and the prompt is overdesigned.
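To keep debugging to one change at a time, the checklist can be encoded as an ordered list of fixes per symptom. This hypothetical Python sketch condenses the four cases above; the symptom names and fix order are illustrative, and `next_fix` simply returns the first fix you have not tried yet.

```python
from typing import Optional

# Symptom -> fixes to try, condensed from the checklist above, in a suggested order.
FIXES = {
    "jittery motion": [
        "trim the reference clip tighter",
        "remove messy frames at the start or end",
        "simplify the background",
        "reduce style clutter in the prompt",
    ],
    "camera move ignored": [
        "use a stronger reference clip",
        "reduce competing subject motion",
        "restate the move clearly in text",
    ],
    "style drift": [
        "shorten the style language to one or two anchors",
        "add a still image for look; keep the clip for motion only",
    ],
    "identity breaks": [
        "simplify the scene",
        "add a stronger subject image reference",
    ],
}


def next_fix(symptom: str, tried: set[str]) -> Optional[str]:
    """Return the first untried fix for a symptom, or None if all have been tried.

    Applying one fix per generation keeps it clear which change helped.
    """
    if symptom not in FIXES:
        raise ValueError(f"unknown symptom: {symptom}")
    for fix in FIXES[symptom]:
        if fix not in tried:
            return fix
    return None


tried: set[str] = set()
print(next_fix("style drift", tried))  # → shorten the style language to one or two anchors
```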

How to Use Seedance 2.0 More Efficiently

The best Seedance workflow is iterative.

Start with a shorter test generation before you attempt a full sequence.

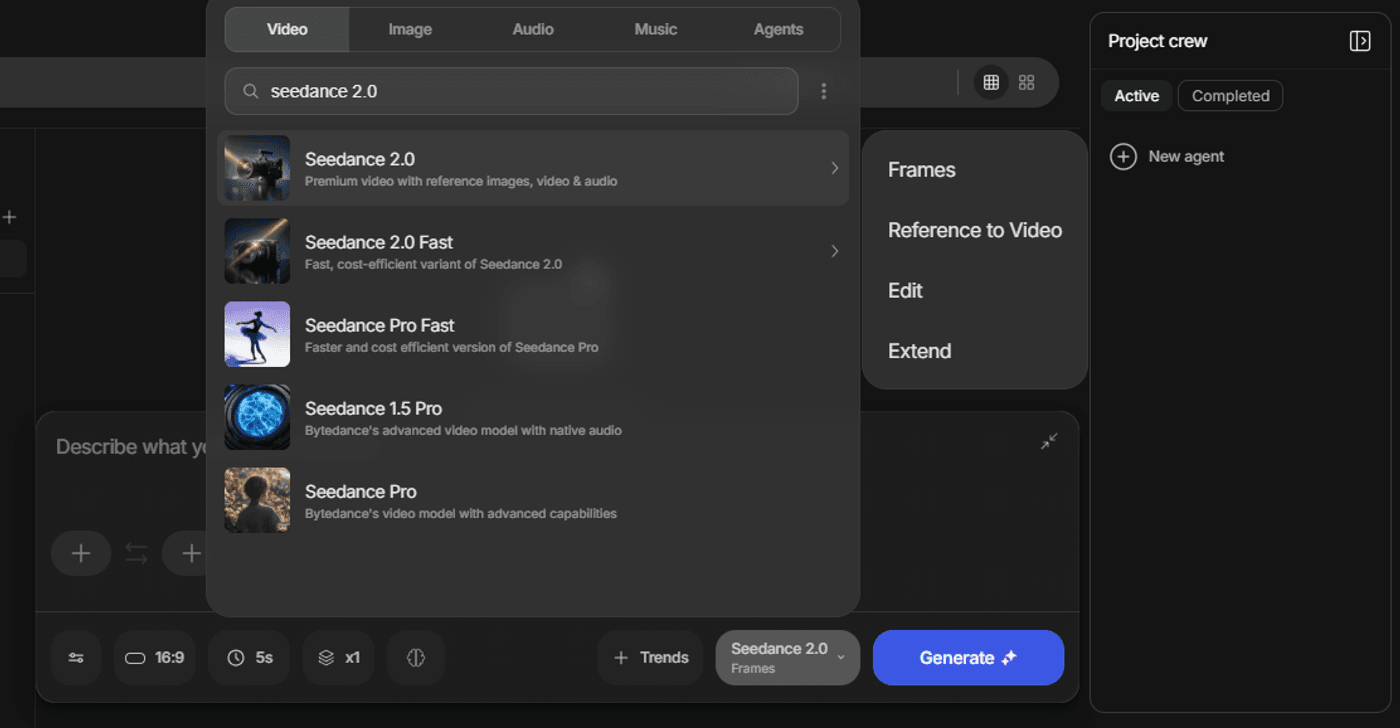

With invideo, if you are still testing prompt structure, references, or camera direction, use Seedance 2.0 Fast to explore quickly. Once the subject, motion, and overall scene logic feel right, move to the full Seedance 2.0 model for the stronger final render.

- Lock the subject and motion first
- Then refine style
- Then generate alternates

Always save the prompts that work and reuse strong reference patterns instead of starting from zero every time.

This is especially important if you are making ads, short-form content, or repeated branded formats. In those workflows, consistency matters more than novelty. A repeatable system beats a lucky render.

That is the real answer to how to use Seedance 2.0 well. Do not think of it as a machine that rewards poetic prompts. Think of it as a system that rewards clear direction.

A Quick Note on Rights and Consent

If you are using reference images, videos, or audio, make sure you actually have the right to use them.

That means getting consent from people in frame, avoiding unlicensed music, avoiding visible third-party logos or artwork when possible, and keeping records for important assets. If a reference clip comes from someone else, “found online” is not the same as licensed.

This part is not creative, but it matters. Good workflows are not just effective. They are usable.

Final Takeaway

The best Seedance 2.0 prompts are not the longest prompts. They are the clearest ones.

Text should define the look of the world. Images should hold identity and appearance. Video references should carry timing and motion. Audio should shape rhythm and sound. Once each input has a clear role, Seedance 2.0 stops feeling unpredictable and starts feeling much more directable.

If you approach it that way, Seedance 2.0 AI video generation becomes far more practical. You are no longer trying to persuade the model with adjectives. You are giving it a structured set of instructions.

That is the real shift. Better prompting is not about sounding smarter. It is about giving the model better control signals.

If you want to use Seedance 2.0 in a more complete workflow, you can do that inside invideo, where you can move from generation to iteration, editing, and export in one place.

FAQs

1. What is Seedance 2.0?

Seedance 2.0 is a multimodal AI video model that can work from text, images, video references, and audio together. Its main advantage is that it gives creators more control over consistency, motion, camera behavior, and sound than a text-only workflow.

2. How do you use Seedance 2.0?

The practical answer is to choose the right workflow first, then prompt with structure. Use text to define the subject, action, scene, camera, style, and audio. Add image references when identity or appearance needs to stay stable. Add video references when timing or motion matters. Then iterate based on what actually failed in the output.

3. Is Seedance 2.0 better with text prompts or reference videos?

Neither is universally better. They solve different problems. Text is better for visual direction, mood, setting, and style. Reference video is better for timing, motion, pacing, gesture rhythm, and camera curves. The strongest results usually come from using both with clear roles.

4. Can Seedance 2.0 generate audio too?

Yes, Seedance 2.0 can generate sound as part of the output. That includes ambience, sound effects, music feel, and some dialogue use cases. In practice, audio direction works best when you describe the sound clearly instead of leaving it implied.

5. What is the best prompt structure for Seedance 2.0 AI video generation?

A strong structure is: subject, action, scene, camera, style, audio, and constraints. That keeps the prompt readable while making sure each important part of the generation is covered.

6. How can I improve consistency in Seedance 2.0 AI video outputs?

Use fewer but better references. Keep shots simpler. Use images to lock identity, video to lock motion, and text to lock style. Avoid overloading the prompt with too many adjectives or too many actions in one shot. Most importantly, debug the real issue instead of rewriting everything at once.