Key Takeaways

-

Wan 2.7 is Alibaba's latest AI video model, released in April 2026 by Tongyi Lab.

-

Its standout feature is Thinking Mode, which helps the model interpret and plan your prompt before generation begins.

-

Wan 2.7 supports native audio sync, first and last frame control, multi-reference consistency, and instruction-based editing.

-

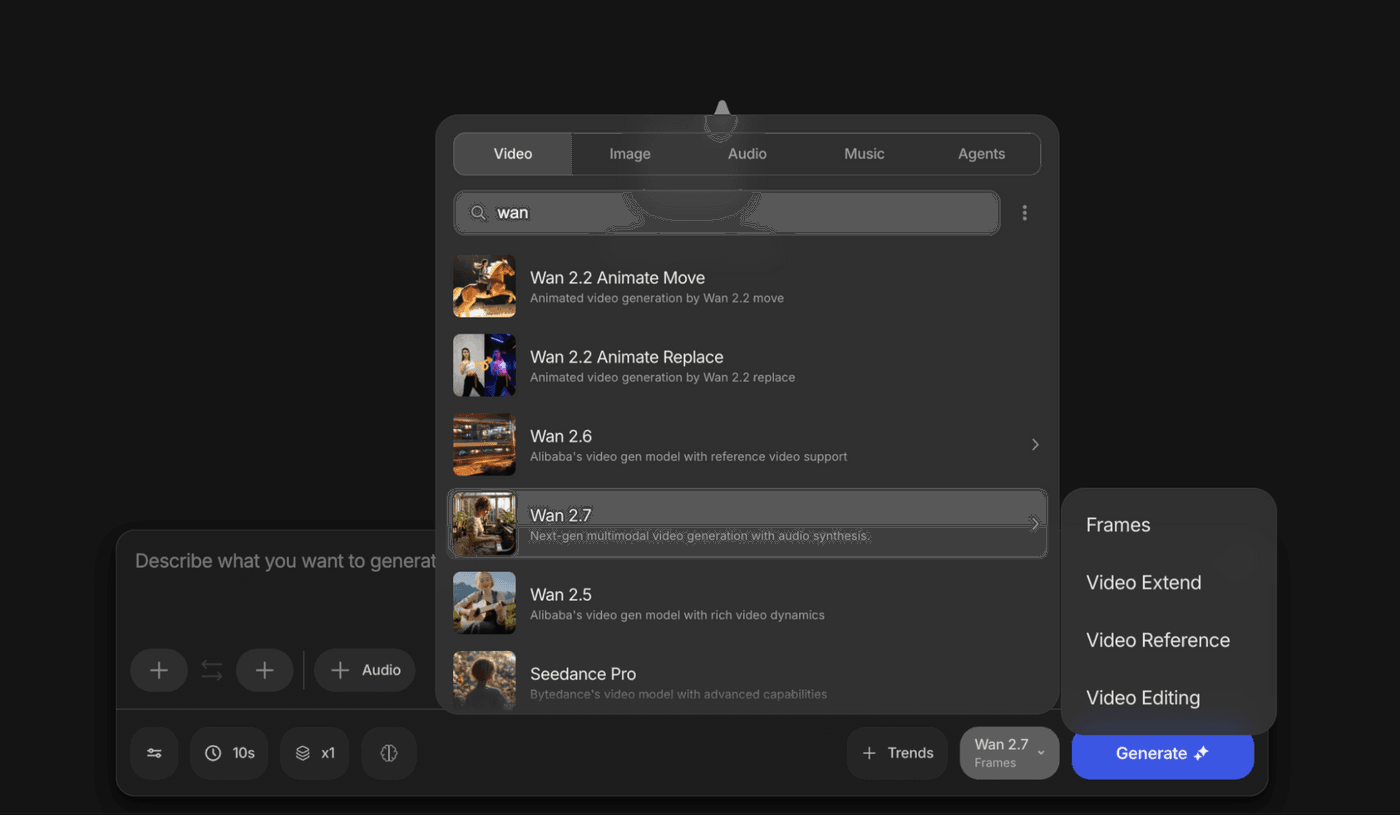

In invideo, you can access Wan 2.7 within Agents and Models.

-

Wan 2.7 includes four video workflows: Frames, Video Extend, Video Reference, and Video Editing.

-

Wan 2.7 is also available for image generation in invideo, with features like Thinking Mode, 4K output, color palette control, Thousand-Face Realism, superior text rendering, and up to 9 image references.

Most AI video tools move too fast.

You type a prompt, hit generate, and watch the model rush into creation before it fully understands what you want. The result might look impressive for a second, but once you look closer, the cracks show. The composition feels generic. The motion drifts. The scene loses the intent you started with.

Wan 2.7 takes a more deliberate approach.

Before it generates a frame, it interprets your prompt, plans the scene, and builds a stronger structural understanding of what you are trying to create. That changes the experience for you as a creator. You get more control, more consistency, and more room to shape the final output with intent.

If you want an AI video model that feels more responsive to direction and more useful in a real production workflow, Wan 2.7 is worth understanding.

What Is Wan 2.7?

Wan 2.7 is a next-generation AI video and image generation model from Alibaba's Tongyi Lab. It is built for creators who want more control over how a video looks, moves, and holds together across shots.

Wan 2.7 is designed to support more deliberate creation. You can use it to generate from scratch, work from references, extend existing motion, or transform footage with instructions. That makes it more useful in real creative workflows where you are often iterating, refining, and building from an existing asset rather than starting over each time.

What makes the model especially interesting is the way it handles generation itself. Instead of treating your prompt like a trigger, it treats it more like a brief. It first works to understand the scene, then starts building it.

Why Wan 2.7 Feels Different

1. Thinking Mode gives your prompt more weight

Thinking Mode is the defining feature of Wan 2.7.

Instead of moving straight into generation, the model first interprets your prompt, plans the composition, and builds a structural understanding of the scene. That extra reasoning step can make a real difference in the result you get back.

If you write a prompt with clear direction around subject, setting, lighting, camera style, and mood, Wan 2.7 is designed to respond with more intent. That matters because the best AI video output usually comes from clarity, not volume. When the model understands what you mean, you spend less time correcting what it guessed.

2. Native audio sync creates stronger performance

Wan 2.7 supports native audio synchronization during generation.

If you provide an audio file, the model uses it as part of the generation process itself. That helps lip movement, body motion, and rhythm feel more connected to the performance from the start.

If you are creating dialogue-led scenes, presenter-style videos, or character-driven content, that can make the output feel more cohesive and more usable.

3. First and last frame control improves shot precision

Wan 2.7 also supports first and last frame control, which gives you more control over how motion begins and ends.

This is especially useful when you already know the visual beat you want at the start and the finish of a scene. Instead of hoping the model lands on a usable endpoint, you can guide the transition with more intention.

If you think in storyboards, sequences, or visual arcs, this makes the model far more practical.

4. Multi-reference consistency helps shots stay coherent

Wan 2.7 supports multiple references to help preserve subject identity and visual consistency across generations.

That matters when you want the same character, styling, or scene logic to carry across multiple outputs. Without that consistency, even a beautiful generation can break the moment you try to build a sequence.

For branded storytelling, recurring characters, and multi-shot content, this becomes especially valuable.

5. Instruction-based editing makes iteration easier

You can also use Wan 2.7 to edit an existing clip using plain-language instructions.

That means you can change the look, feel, or visual treatment of footage without rebuilding it from scratch. If you want to shift the lighting, update the style, alter scene elements, or explore alternate versions of the same asset, this workflow gives you a faster way to iterate.

That is useful whether you are a solo creator testing ideas or a team producing multiple campaign variants.

Wan 2.7 Workflows for Video

Wan 2.7 is more useful when you stop thinking of it as one model with one button.

Inside invideo, it gives you four distinct video workflows. Each one solves a different creative problem. If you pick the right mode at the start, you give yourself a much better chance of getting a strong result faster.

1. Frames

Use Frames when you want to control how a shot begins and ends

Frames is the mode to use when you want to guide Wan 2.7 with both the first frame and the last frame of a shot.

Instead of starting from a single image or prompt and leaving the ending open, you define the opening visual and the destination. Wan 2.7 then generates the motion between those two anchor points. That gives you more control over pacing, continuity, and scene direction.

This mode works well when the visual transition itself matters. If you are creating a product reveal, a cinematic transformation, a before-and-after sequence, or a scene that needs to land on a specific final image, Frames gives you a more deliberate workflow.

To get better results, keep your first and last frames aligned in aspect ratio, subject placement, and lighting. The more visually connected those anchors are, the more coherent the motion tends to feel.

2. Video Extend

Use Video Extend when you want more from a clip that is already working

Video Extend is the mode to choose when you already have a clip and want to continue it naturally.

This is especially useful when your current shot has the right motion, mood, or subject, but it ends too early. Instead of rebuilding the scene from zero, you can extend it and carry the action forward.

That makes this mode practical for storytelling, ad sequences, and cinematic clips where you want to hold the moment a little longer or create a smoother continuation into the next beat.

If you already like the direction of the clip, Video Extend helps you build on that momentum instead of replacing it.

3. Video Reference

Use Video Reference when identity and consistency matter most

Video Reference is the right mode when you want Wan 2.7 to follow reference inputs more closely and preserve stronger visual consistency.

This is where reference-led creation becomes especially useful. You can guide the model with visual inputs that help it understand your subject more fully, including multi-angle references. In workflows where a 3x3 grid of up to nine images is used, Wan 2.7 can build a richer understanding of the subject across different views, which helps maintain more stable character identity and appearance throughout the generated video.

That matters when you are working with recurring characters, branded visuals, stylized subjects, or any scene where drift would weaken the final output.

If your priority is not just creativity but continuity, Video Reference gives you a stronger starting point than a prompt alone.

4. Video Editing

Use Video Editing when you want to transform an existing clip

Video Editing is the mode to use when you already have footage and want to change it with text instructions.

Instead of generating a new clip from scratch, you upload an existing video and tell Wan 2.7 what to change. You can use that workflow to alter the background, shift the lighting, adjust colors, update styling, or modify scene elements while keeping the base structure of the original clip.

This is one of the most practical ways to use Wan 2.7 because it fits how creative work actually happens. You often do not want to start over. You want to improve, adapt, or localize what already works.

If you are producing variants, refining a concept, or tailoring a video for a different audience, Video Editing is often the smartest place to start.

Wan 2.7 Image on invideo

Wan 2.7 is not just a video model on invideo. You can also use it to generate images.

That matters because creative workflows rarely stay in one format. You may need stills for concept development, ad creatives, storyboards, product visuals, thumbnails, campaign assets, or reference images before you ever create the final video. Wan 2.7 Image helps you do that in the same broader ecosystem.

1. Thinking Mode for images: Wan 2.7 Image brings the same core idea that makes the video model compelling. It does not just react to your prompt. It parses the prompt, plans the composition, works through subject placement and lighting, and then generates the image with more structure. That gives you a better chance of getting an output that feels intentional rather than random. If you care about composition and visual clarity, this matters.

2. 4K image output: Wan 2.7 Image supports image generation up to 4K resolution. That makes it useful for more than quick mockups. You can use it for high-resolution campaign assets, product visuals, thumbnails, social creatives, presentations, and other use cases where quality needs to hold up beyond a small preview.

3. Color palette control: If brand consistency matters, this feature stands out. Wan 2.7 Image lets you define exact HEX color codes and control palette direction more precisely. That gives you a better way to create visuals that stay closer to your brand system, product palette, or campaign art direction. If you are designing for ads, product marketing, or branded storytelling, this can save you time in revision.

4. Thousand-Face Realism: Wan 2.7 Image also supports more detailed facial control. That helps you create faces that feel more distinct instead of falling into the familiar AI pattern where different characters start to look like variations of the same person. If you need uniqueness across portraits, characters, or campaign visuals, this is a meaningful strength.

5. Superior text rendering: Text is where many image models still struggle. Wan 2.7 Image is built to handle longer, more legible text across multiple languages, which makes it more useful for signs, labels, packaging concepts, poster-like layouts, and other visuals where readable text is part of the composition.

6. 9-image reference input: Wan 2.7 Image can also work with up to nine reference images in a single generation or editing request. That gives the model more context to work from, which can help it stay more aligned to a visual style, subject, or overall creative direction. If you need consistency across a campaign or want tighter control over the look of the output, this reference depth gives you more leverage.

You can access Wan 2.7 inside Agents and Models in invideo.

If you want to create video, go to the Video tab and select Wan 2.7. From there, you can choose the mode that fits your workflow: Frames, Video Extend, Video Reference, or Video Editing.

If you want to create stills, go to the Image tab and select Wan 2.7 Image. That gives you access to Wan 2.7's image generation capabilities, including Thinking Mode, 4K output, color palette control, and multi-image reference support.

How to Get Better Results with Wan 2.7

1. Write like a director: Wan 2.7 rewards specificity. Give it the subject, action, setting, lighting, camera style, and mood. The more clearly you define the shot, the more useful the output tends to be.

2. Match the mode to the job: A lot of weak AI output comes from using the wrong workflow. If you need to continue a clip, do not restart from scratch. If you need consistency, do not rely on a loose prompt alone. Start from the mode that matches your goal.

3. Treat the first output as a draft: The strongest results usually come from refinement. Review what worked, tighten your input, adjust references, and iterate with intent.
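To make "write like a director" more concrete, here is a small illustrative sketch that turns the six elements from tip 1 (subject, action, setting, lighting, camera style, and mood) into a single plain-text prompt. The `ShotBrief` class and `build_prompt` function are hypothetical helpers written for this example. They are not part of invideo or any Wan 2.7 API. The idea is simply to fill in every element before you paste the result into the prompt box.

```python
from dataclasses import dataclass, fields


@dataclass
class ShotBrief:
    """Hypothetical director-style brief (not an invideo or Wan 2.7 API)."""
    subject: str
    action: str
    setting: str
    lighting: str
    camera: str
    mood: str


def build_prompt(brief: ShotBrief) -> str:
    """Join the brief into one prompt string, refusing briefs with blank elements."""
    # Collect any element left empty so vague prompts are caught before generation.
    missing = [f.name for f in fields(brief) if not getattr(brief, f.name).strip()]
    if missing:
        raise ValueError(f"Brief is missing: {', '.join(missing)}")
    return (
        f"{brief.subject} {brief.action} in {brief.setting}. "
        f"Lighting: {brief.lighting}. Camera: {brief.camera}. Mood: {brief.mood}."
    )


if __name__ == "__main__":
    brief = ShotBrief(
        subject="A barista",
        action="slides a latte across the counter",
        setting="a small corner café at dawn",
        lighting="warm window light with soft shadows",
        camera="slow dolly-in at eye level",
        mood="calm and inviting",
    )
    print(build_prompt(brief))
```

The check for blank fields reflects the advice above. A shot with no lighting or camera direction forces the model to guess, so this sketch treats a missing element as an error rather than quietly dropping it.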

Wan 2.7 is a strong fit if you want more control over the creative process.

If you are a solo creator, it gives you tools that help your work feel more deliberate and more polished. If you are part of a creative team, it gives you multiple ways to generate, extend, reference, and edit without forcing everything into one workflow. If you care about consistency, structure, and prompt quality, it gives you more room to create with precision.

Wan 2.7 For the Win

Wan 2.7 stands out because it gives you more than a single generation. It gives you usable creative pathways.

That is what makes the four invideo modes so important. Frames helps you create. Video Extend helps you continue. Video Reference helps you stay aligned. Video Editing helps you refine. Together, they make Wan 2.7 feel less like a single feature and more like a flexible video creation system.

If you want an AI model that supports deliberate creation, not just fast output, Wan 2.7 is worth exploring.

You can access it within Agents and Models in invideo.

FAQs

1. What is Wan 2.7?

Wan 2.7 is an AI video generation model from Alibaba's Tongyi Lab. It supports text-to-video, image-to-video, reference-based generation, and instruction-based editing.

2. How do I access Wan 2.7?

Go to the Agents and Models tab at the bottom of the invideo dashboard. Select Generative Models, and you will find the Wan model lineup there. The advantage of working in invideo is that you can combine Wan 2.7 outputs with other models, VFX tools, and caption presets within the same project, without switching between platforms.

3. Wan 2.7 vs Kling 3.0: which one should I use?

It depends on what you're making. Kling 3.0 is easier to use out of the box. Wan 2.7 asks for more effort on your side, but the payoff is better multi-shot control, stronger character consistency across different angles, and the ability to edit existing footage through text instructions. If you're building a storyboard-driven sequence or need precise compositional control, Wan 2.7 is the better fit. For quick, single-shot social content, either works fine.

4. Can Wan 2.7 keep the same character consistent across multiple shots?

Yes, and this is one of its strongest features. You can feed up to five reference images or videos into a generation, and the model uses them to maintain consistent subject identity across different shots and camera angles. You don't have to re-upload references for every new shot. It's not flawless with very dramatic lighting changes, but for standard multi-angle sequences, the consistency holds up well enough for production use.